Project Proposal

1. High-level project introduction and performance expectation

Proposal:

The purpose of this paper is to describe the implementation of a “Multiple Mobile Robots” (MMR) system that plans and controls the execution of logistics tasks by a set of mobile robots in a real-world hospital environment. The MMR is built on an architecture that hosts a routing engine, a supervisor module, controllers, and a cloud service. The routing engine handles the geo-referenced data and the calculation of routes. The supervisor module implements algorithms that solve the task allocation problem and the trolley loading problem, and it maintains a temporal estimate of each robot’s position at any given time so that the robots’ movements can be synchronized. The cloud service provides a messaging system for exchanging information with the robotic fleet, while the controller implements the control rules that ensure each robot executes its part of the work plan. The proposed MMR has been developed to provide a safe, efficient, and integrated indoor robotic fleet for logistics applications in healthcare and commercial spaces. In addition, a computational analysis is performed using a virtual hospital floor plan.

Introduction:

Constant technological development forces the industrial sector to adapt continuously in order to meet the demands of the corporate market. To remain competitive, industries must seek new, innovative solutions that create value. These solutions do not always translate into a direct increase in the value of the final product. In most industries, production costs have a significant impact on market competitiveness, which has driven a significant evolution of automated systems. These systems make it possible to reduce labour costs while also optimizing production time. As a product of this evolution, Automated Guided Vehicle (AGV) systems were created and saw their first use in an industrial environment in 1954. Since then, the use of this type of system has increased steadily, and AGVs are now a common sight in industry.

Coordinating a fleet of autonomous vehicles is a very complex task, and most multi-robot systems currently rely on static, pre-configured interactions between the robots. The task becomes even more difficult once the unpredictability of communication faults is taken into account. For this reason, the study of trajectory planning algorithms, combined with methods for detecting and mitigating communication faults, has grown in recent years. These algorithms use the robots’ localization information, either predicted or measured, to control the traffic of the robot fleet and to plan safe and optimized routes in an industrial environment.

Performance Expectation: 70%

2. Block Diagram

Block Diagram:

3. Expected sustainability results, projected resource savings

Expected Sustainability Results:

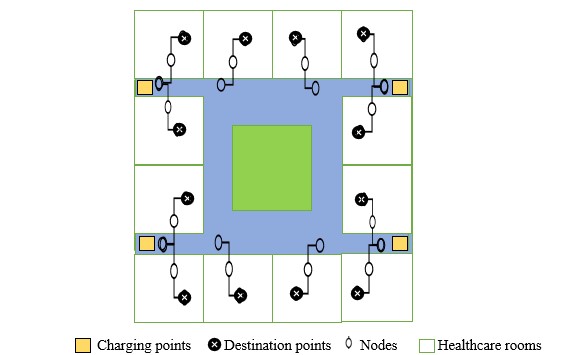

Consider an O-shaped healthcare block with 12 rooms on one floor.

Charging points: There are four charging points in total. Robots return to these points to recharge when no task is assigned. The supervisor module is written so that a robot moves to the nearest charging point.

Destination points: Because there are twelve rooms, we consider twelve destination points. These are the points at which tasks are assigned. The supervisor module is written so that the robot nearest to a task takes it up.

Nodes: There are twenty node points in total. These points form the paths along which the robots travel.

Healthcare rooms: We consider a total of 12 rooms in this project.
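
The nearest-robot and nearest-charging-point rules described above can be sketched as follows. This is only an illustration in Python, not the supervisor module itself: the ring-shaped connectivity of the twenty nodes, the charging-point positions, the robot ids, and all function names are assumptions made for the example.

```python
from collections import deque

# Assumption: the 20 path nodes form a closed ring (the 'O' shape), so node i
# is adjacent to nodes (i - 1) % 20 and (i + 1) % 20.
NUM_NODES = 20
GRAPH = {n: [(n - 1) % NUM_NODES, (n + 1) % NUM_NODES] for n in range(NUM_NODES)}

# Assumption: hypothetical positions of the four charging points on that ring.
CHARGING_NODES = [0, 5, 10, 15]


def hop_distances(graph, start):
    """Breadth-first search: number of hops from start to every reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in graph[node]:
            if neighbour not in dist:
                dist[neighbour] = dist[node] + 1
                queue.append(neighbour)
    return dist


def nearest_robot(graph, robot_nodes, task_node):
    """Return the id of the robot closest to task_node (ties: lowest id)."""
    dist = hop_distances(graph, task_node)
    return min(sorted(robot_nodes), key=lambda rid: dist[robot_nodes[rid]])


def nearest_charging_point(graph, robot_node, charging_nodes=CHARGING_NODES):
    """Return the charging node closest to robot_node (ties: lowest node)."""
    dist = hop_distances(graph, robot_node)
    return min(sorted(charging_nodes), key=lambda node: dist[node])


robots = {"R1": 0, "R2": 5, "R3": 10, "R4": 15}  # robot id -> current node
print(nearest_robot(GRAPH, robots, task_node=7))      # R2
print(nearest_charging_point(GRAPH, robot_node=13))   # 15
```

Hop count is used as the distance measure here only because the real corridor lengths are not given; a weighted distance would slot into the same two selection functions.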

4. Design Introduction

Design Introduction:

The proposed MMR has been developed to provide a safe, efficient, and integrated indoor robotic fleet for logistics applications in healthcare and commercial spaces. The mobile application, developed in Dart with the Flutter framework, lets doctors review how patients are taking their medicines and, more importantly, lets family members receive updates on how the healthcare centre is treating the patient. In the backend, a single FPGA drives all four mobile robots without collisions or deadlocks: it tracks the robots’ current geolocations and issues an updated route plan to each of the four robots.
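
One way to picture the collision-free requirement is a check over time-stamped plans. The sketch below is an assumption-laden illustration in Python, not the FPGA logic: it assumes each plan is a list of nodes with one node per time step, and the names `find_conflicts` and `delay` are hypothetical. It only detects collisions and resolves them by waiting; deadlock handling is not covered.

```python
from itertools import combinations


def position_at(path, t):
    """Node occupied at time step t; a robot waits at its last node once done."""
    return path[min(t, len(path) - 1)]


def find_conflicts(paths):
    """Return (time, robot_a, robot_b, kind) tuples for every detected conflict.

    paths maps a robot id to its planned node sequence, one node per time step.
    'vertex' means both robots occupy the same node at the same time step;
    'swap' means they exchange nodes between two consecutive time steps.
    """
    horizon = max(len(p) for p in paths.values())
    conflicts = []
    for a, b in combinations(sorted(paths), 2):
        for t in range(horizon):
            a_now, b_now = position_at(paths[a], t), position_at(paths[b], t)
            if a_now == b_now:
                conflicts.append((t, a, b, "vertex"))
            if t + 1 < horizon:
                a_next = position_at(paths[a], t + 1)
                b_next = position_at(paths[b], t + 1)
                if a_next == b_now and b_next == a_now and a_now != b_now:
                    conflicts.append((t, a, b, "swap"))
    return conflicts


def delay(path, steps):
    """Simplest resolution: hold the robot at its start node for some steps."""
    return [path[0]] * steps + list(path)


plans = {"R1": [0, 1, 2, 3], "R2": [6, 5, 2, 7]}   # both plans cross node 2
print(find_conflicts(plans))                        # [(2, 'R1', 'R2', 'vertex')]
plans["R2"] = delay(plans["R2"], 1)
print(find_conflicts(plans))                        # []
```

Delaying one robot resolves crossing paths like the one above, but two robots meeting head-on in the same corridor would still need one of them to be rerouted.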

5. Functional description and implementation

Function Description:

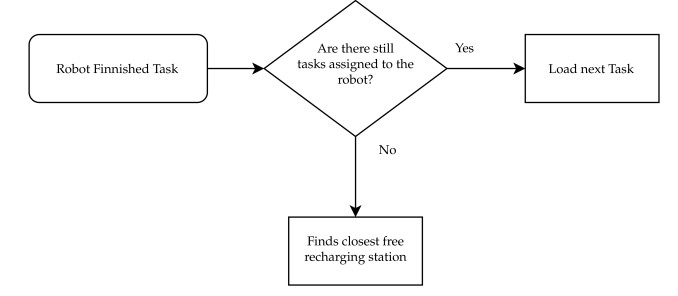

A command is issued from the mobile application to the FPGA controller, and the FPGA then assigns the task to a robot based on robot availability.

Upon receiving the command from the FPGA, the mobile robot starts executing the task using a shortest-path approach.
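
The source does not say which shortest-path method is used. As one common choice, the sketch below uses Dijkstra's algorithm in Python; the corridor graph, its edge lengths, and the function name `shortest_path` are assumptions for illustration, and the robots' actual Embedded C implementation may differ.

```python
import heapq


def shortest_path(graph, start, goal):
    """Dijkstra's algorithm. graph maps node -> {neighbour: edge_length}.

    Returns (total_length, [start, ..., goal]), or (float('inf'), []) if the
    goal cannot be reached.
    """
    best = {start: 0.0}
    previous = {}
    heap = [(0.0, start)]
    while heap:
        cost, node = heapq.heappop(heap)
        if node == goal:
            break
        if cost > best.get(node, float("inf")):
            continue  # stale heap entry, a shorter route was already found
        for neighbour, length in graph[node].items():
            new_cost = cost + length
            if new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                previous[neighbour] = node
                heapq.heappush(heap, (new_cost, neighbour))
    if goal not in best:
        return float("inf"), []
    path = [goal]
    while path[-1] != start:
        path.append(previous[path[-1]])
    return best[goal], path[::-1]


# Hypothetical corridor segment lengths in metres.
corridor = {
    "A": {"B": 2, "D": 7},
    "B": {"A": 2, "C": 2},
    "C": {"B": 2, "D": 2},
    "D": {"A": 7, "C": 2},
}
print(shortest_path(corridor, "A", "D"))  # (6.0, ['A', 'B', 'C', 'D'])
```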

Once the robot has completed the task, it sends an acknowledgement to the FPGA controller indicating that the task is done and that it is waiting for a new task. If no new task is allocated to that robot, it returns to the nearest charging station.
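
The task lifecycle described above can be read as a small state machine. The following Python sketch is one possible reading, not the project's implementation: the state names, event names, and the `outbox` list standing in for messages sent to the FPGA controller are all hypothetical.

```python
from enum import Enum, auto


class RobotState(Enum):
    IDLE = auto()        # waiting at a charging point
    EXECUTING = auto()   # driving to the destination
    WAITING = auto()     # task done and acknowledged, waiting for a new task
    RETURNING = auto()   # heading to the nearest charging point


# (current state, event) -> next state. Event names are assumptions.
TRANSITIONS = {
    (RobotState.IDLE, "task_assigned"): RobotState.EXECUTING,
    (RobotState.EXECUTING, "task_done"): RobotState.WAITING,
    (RobotState.WAITING, "task_assigned"): RobotState.EXECUTING,
    (RobotState.WAITING, "no_task"): RobotState.RETURNING,
    (RobotState.RETURNING, "arrived_at_charger"): RobotState.IDLE,
}


class Robot:
    def __init__(self, robot_id):
        self.robot_id = robot_id
        self.state = RobotState.IDLE
        self.outbox = []  # messages that would be sent to the controller

    def handle(self, event):
        """Apply one event; events not valid in the current state are ignored."""
        next_state = TRANSITIONS.get((self.state, event))
        if next_state is None:
            return self.state
        if event == "task_done":
            # Acknowledge completion to the controller.
            self.outbox.append({"robot": self.robot_id, "type": "ack"})
        self.state = next_state
        return self.state


robot = Robot("R1")
for event in ["task_assigned", "task_done", "no_task", "arrived_at_charger"]:
    print(event, "->", robot.handle(event).name)
```

Keeping the transitions in a table makes it easy to see which events are accepted in each state, which is also how such logic is often laid out before being written in an HDL or in C.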

The mobile application is implemented in Dart using the Flutter framework.

The task allocation strategies are developed in the Verilog hardware description language, and the Intel Quartus Prime tool is used to validate the design.

The task execution method for the mobile robots is developed in Embedded C.

6. Performance metrics, performance to expectation

Performance Parameters:

The main parameters targeted in this work are:

1. Path planning will be enhanced by developing suitable navigation algorithms.

2. Task allocation will be updated automatically from the central FPGA controller.

3. Tasks will be completed without colliding with obstacles, to ensure safe and cost-efficient navigation.

Intel FPGA usage:

The Intel FPGA has a built-in SoC, that is, an on-chip processor, so no additional controller is needed to perform the task.

It has a built-in Wi-Fi module, so no separate Wi-Fi module is needed to connect to the cloud.

Intel provides access to the Azure IoT platform, so using this service will be efficient for our application.

7. Sustainability results, resource savings achieved

Design Architecture:

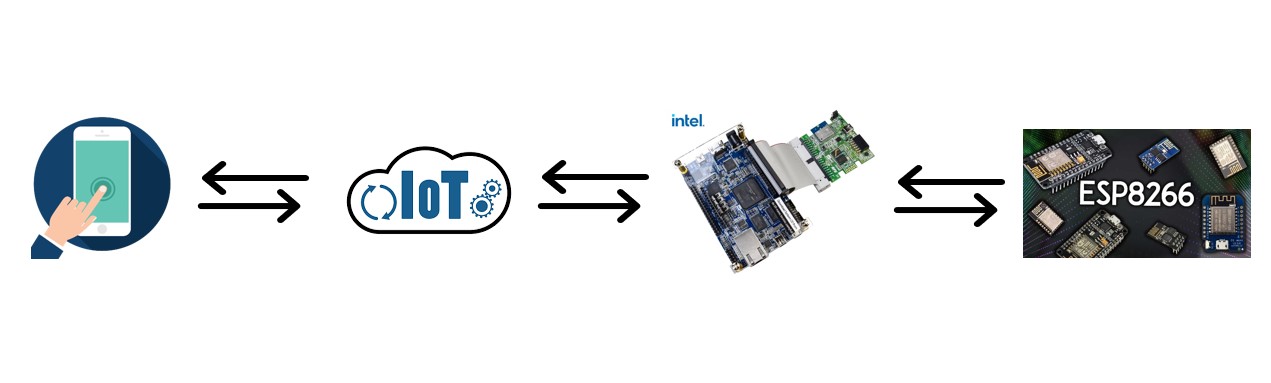

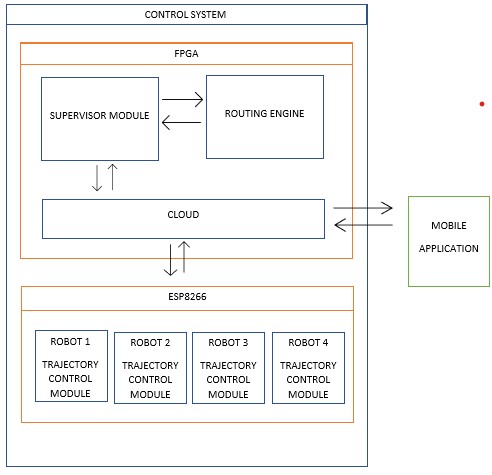

The environment is divided into two segments: the control system and the mobile application. The control system is further divided into two parts: the FPGA and the ESP8266 Wi-Fi module.

FPGA:

The central control unit uses the interaction between the supervisor module and the modified routing engine to control each robot’s planned path, which is then transmitted to the robot. The robot control algorithm, written in Verilog HDL, is responsible only for controlling the velocity and direction of each of the robot’s wheels so that the robot can accurately follow its assigned path. The routing engine implements a task scheduling function. The supervisor module decides when the robots’ paths need to be replanned and detects, measures, and handles communication faults. The cloud acts as a mediator between the FPGA and the Arduinos, which makes it possible to check how the tasks are being performed and to receive updates on the geolocations of the mobile robots.
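
The source does not describe how communication faults are detected or measured. A minimal sketch of one common approach, a per-robot message timeout, is shown below in Python. The class name `LinkMonitor`, the 2-second threshold, and the use of explicit timestamps are assumptions made for the example.

```python
class LinkMonitor:
    """Tracks the last message time per robot and flags robots that went silent."""

    def __init__(self, timeout_s=2.0):
        self.timeout_s = timeout_s   # assumption: hypothetical threshold
        self.last_seen = {}          # robot id -> timestamp of last message

    def record_message(self, robot_id, now):
        """Call whenever any message from the robot arrives."""
        self.last_seen[robot_id] = now

    def silence(self, robot_id, now):
        """Seconds since the robot was last heard from (the measured fault)."""
        return now - self.last_seen[robot_id]

    def faulty_robots(self, now):
        """Robots whose silence exceeds the timeout, in id order."""
        return sorted(
            rid for rid in self.last_seen if self.silence(rid, now) > self.timeout_s
        )


monitor = LinkMonitor(timeout_s=2.0)
monitor.record_message("R1", now=0.0)
monitor.record_message("R2", now=1.5)
print(monitor.faulty_robots(now=3.0))  # ['R1']
```

A supervisor could use the returned list as the trigger for replanning, for example by treating the last known node of a silent robot as blocked until it is heard from again.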

ESP8266:

In this project we will implement four mobile robots, each of which contains an ESP8266. Each ESP8266 runs a trajectory control module, written in Embedded C, that carries out the commands received from the FPGA.
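
The drive configuration of the robots is not specified. Assuming a two-wheel differential drive, which is only an assumption, the core calculation of a trajectory control module would convert a commanded forward speed and turn rate into left and right wheel speeds. The Python sketch below illustrates that standard relation; the function name and the example dimensions are hypothetical.

```python
def wheel_speeds(v, omega, wheel_base, wheel_radius):
    """Differential-drive inverse kinematics.

    v: forward speed in m/s
    omega: turn rate in rad/s (positive = counter-clockwise)
    wheel_base: distance between the two wheels in m
    wheel_radius: wheel radius in m
    Returns (left, right) wheel angular speeds in rad/s.
    """
    left = (v - omega * wheel_base / 2.0) / wheel_radius
    right = (v + omega * wheel_base / 2.0) / wheel_radius
    return left, right


# Driving straight: both wheels turn at the same speed.
print(wheel_speeds(v=0.5, omega=0.0, wheel_base=0.2, wheel_radius=0.05))
# Turning on the spot: the wheels turn in opposite directions.
print(wheel_speeds(v=0.0, omega=1.0, wheel_base=0.2, wheel_radius=0.05))
```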

Mobile Application:

In this project we will implement an IoT-based mobile application, developed in Dart with the Flutter framework, in which the user can issue commands and view updated information within the application itself [3].

8. Conclusion
