FPGAs can enhance electromedical applications, including patient monitoring, ventilation, and other life-sustaining functions. By using FPGAs and associated analog connectivity devices, electromedical care can be distributed, reducing the need for concentrated services and their associated transportation and other costs. This project seeks to apply FPGAs to these applications by exploiting integrated sensors and compute capability.

Project Proposal

1. High-level project introduction and performance expectation

FPGAs can improve healthcare in several ways: shortening processing times, enabling AI, providing reconfigurable capabilities, and allowing fast implementation of hardware designs. For health sensing devices where size, weight, and power are critical constraints, such as those used in rural or remote settings, combining these features can improve healthcare for many who may not have access to large healthcare infrastructure.

One area where FPGAs excel is interfacing with sensors and processing signals. In healthcare, sensing is essential for early detection of diseases and other issues. In the proposed work, the FPGA will provide a computational and hardware interface for combining multiple sensors for health applications. The FPGA will allow for smaller device sizes that use less power.

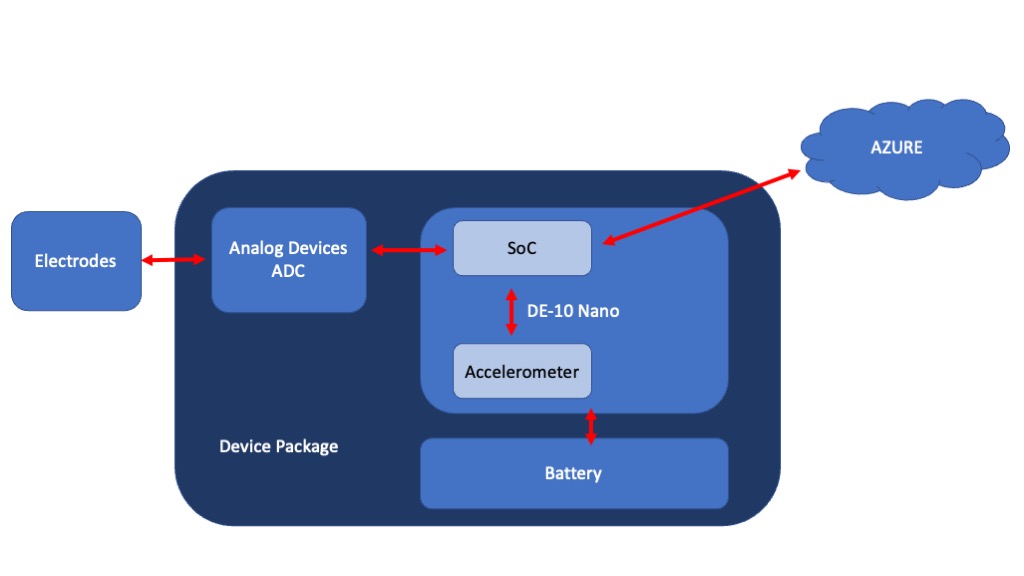

For example, machine learning can be performed on the device. Models can be trained using cloud infrastructure, such as Azure. Once trained, they can be sent to the FPGA platform to perform inference. Combining the cloud platform with the FPGA platform lets each be used where it performs best: the cloud handles the computationally intensive model training, while the FPGA performs the less power-intensive inference.
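The split between training and inference can be illustrated with a minimal Python sketch. This is not the project's implementation: the two-feature toy data, the logistic-regression model, the function names, and the 8-fractional-bit fixed-point format are all assumptions chosen for illustration. The training function stands in for the cloud side, and the integer-only inference function mimics the kind of multiply-accumulate arithmetic that maps well onto FPGA logic.

```python
import math

# Assumed fixed-point format for illustration: 8 fractional bits.
FRAC_BITS = 8
SCALE = 1 << FRAC_BITS

# Hypothetical, normalized two-feature sensor readings (not real patient data).
SAMPLES = [(0.1, 0.2), (0.2, 0.1), (0.3, 0.3), (0.8, 0.9), (0.9, 0.7), (0.7, 0.8)]
LABELS = [0, 0, 0, 1, 1, 1]


def train_logistic(samples, labels, epochs=2000, lr=0.1):
    """Stand-in for cloud-side training: stochastic gradient descent on logistic loss."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            z = sum(w * xi for w, xi in zip(weights, x)) + bias
            err = 1.0 / (1.0 + math.exp(-z)) - y
            weights = [w - lr * err * xi for w, xi in zip(weights, x)]
            bias -= lr * err
    return weights, bias


def quantize(value):
    """Convert a float to a signed fixed-point integer with FRAC_BITS fractional bits."""
    return int(round(value * SCALE))


def export_model(weights, bias):
    """Package the trained parameters as integers, ready to send to the device."""
    return {"weights": [quantize(w) for w in weights], "bias": quantize(bias)}


def infer_fixed_point(model, x):
    """Device-side inference using only integer multiply-accumulate and a sign test."""
    x_fixed = [quantize(v) for v in x]
    acc = model["bias"] * SCALE  # align the bias with the scale of the products
    for w, xi in zip(model["weights"], x_fixed):
        acc += w * xi
    return 1 if acc > 0 else 0


if __name__ == "__main__":
    trained_weights, trained_bias = train_logistic(SAMPLES, LABELS)
    device_model = export_model(trained_weights, trained_bias)
    print(device_model, infer_fixed_point(device_model, (0.85, 0.85)))
```

The sign test replaces the sigmoid at inference time because a probability above 0.5 is equivalent to a positive accumulator, which avoids any floating-point or exponential hardware on the device.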

2. Block Diagram

3. Expected sustainability results, projected resource savings

By providing health sensing in the field, fewer resources are needed to transport people and equipment. By using a multi-sensing platform, fewer single-purpose devices have to be created and distributed. By having an upgradable, future-proof device that can connect to the Azure cloud platform, devices can remain in service for longer periods of time.

Some examples of the savings include:

Reduced Travel Cost - Portable and distributed sensing platforms reduce the need for people to travel to healthcare centers or other places where fixed equipment is held.

Preventative Health - Detecting issues early means fewer resources are needed to treat conditions before they worsen and become more expensive to treat.

Reduced Complexity - By having a smaller device with multiple functions, the use of large, complex equipment can be reduced.

4. Design Introduction

5. Functional description and implementation

6. Performance metrics, performance to expectation

7. Sustainability results, resource savings achieved

8. Conclusion
