EM016 » Augmented Reality with DLPs

The main goal of this proposal is to establish a working Augmented Reality application with a basic but complete, platform-independent communication network. The proposed system uses an FPGA, ARM Linux, an accelerometer/gyroscope, and a DLP to implement a medical demo application that projects an image of a bone fracture onto human skin.

The proposal is to implement a system that projects a 2D image onto a surface in the real world. The appeal of the application is that the object receiving the projection can be observed by many people around it. This improves on classical augmented reality technologies based on glasses or helmets, because the graphic or text data added to the scene can be seen by every user within the projection field of our system. The final implementation is a demo application oriented towards medical uses, but it can also be used for industry, telemetry, video projection, gaming, or similar applications.

Purpose: Augmented Reality (AR) and Mixed Reality (MR) applications are a current trend in technology and application development. Even though there are affordable development kits for testing AR and MR, a complete demo application can provide insight into what can be done with cutting-edge technology. The intention of this proposal is to show a medical application based on a modular system, which at the same time can be used as a base platform for a wide range of applications.

Applications: The scope of this proposal is to enhance the precision of medical operations by providing guidance through an x-ray projection onto the patient’s skin. The projection stays fixed independently of the position of the projector, which gives a lot of flexibility in the operating room, usually a crowded place. Related applications include telemetry involving one or more moving users but a fixed displayed projection (for example, an operator who needs to fix a machine is guided by a digital projection provided by a remote qualified worker), virtual navigation systems for simulation environments such as airplane or car training rooms, and video games that reveal images only when the projector is facing the right direction.

Target User: medical professionals and students who want AR and MR as an aid to do a better job. Related users are those who need a starting platform for applications that deal with field programmable gate arrays (FPGAs), hard processor systems (HPSs), computer graphics, inertial measurement units (IMUs), digital light processing (DLP), and inter-integrated circuit (I2C) communication, among others.

Design goals:

The core of this proposal is the Cyclone V SoC included on the DE10-Nano. This chip includes an ARM processor and an FPGA, while the board provides a number of GPIOs, Ethernet, USB, etc. that allow fast prototyping. The Quartus development software has valuable documentation, and the demo examples provided by Terasic also increase the likelihood of success. The complementary boards come from well-known companies that provide good documentation as well.

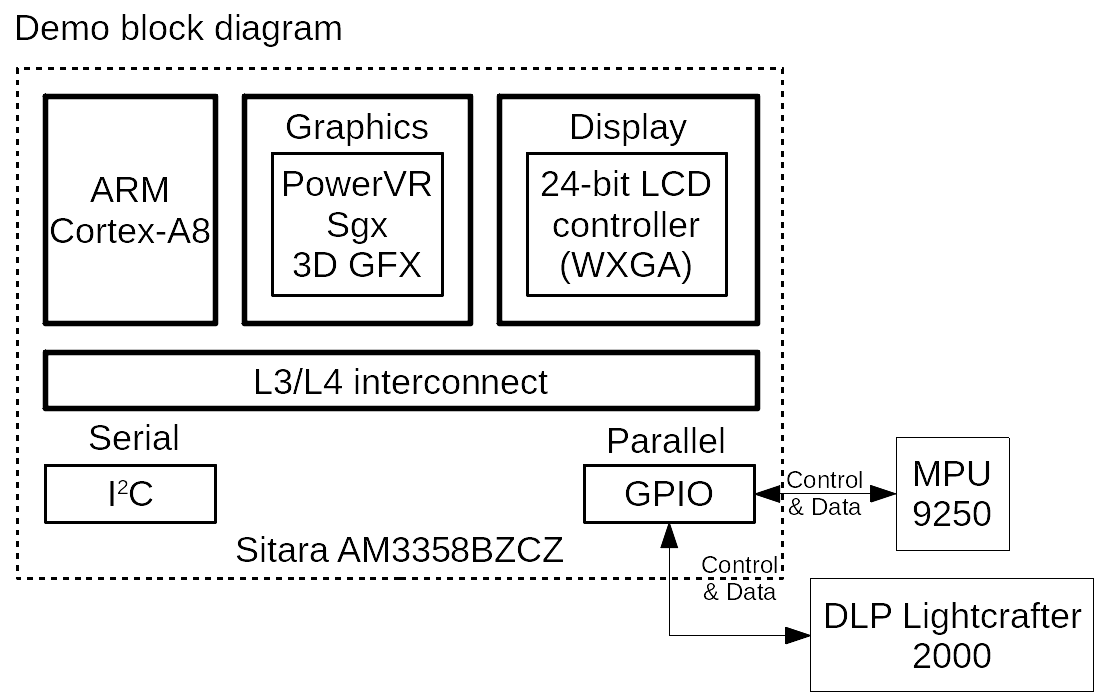

For this AR application, we use the DE10-Nano kit to implement the complete electronic data and control path. The architecture of the proposed solution is shown in the next figure.

This architecture is a refinement of previous work that allowed us to validate our approach, but that was either not portable or less responsive (slower reaction time to IMU changes).

The first approach we took to implement a proof of concept with our available platforms used the Intel VIP (Video and Image Processing) IP implemented in the FPGA part of a DE1-SoC, the I2C bus peripheral of the HPS, and the DLP 3000 EVM through its HDMI input.

The second approach we evaluated used a DLP 2000 EVM. This EVM has a more accessible GPIO interface from which we can directly control the DLP system through a BeagleBone Black. However, this system relies on the GPU that is integrated in the BeagleBone Black's main processor.

The next photo shows the prototype implemented.

The final application has to perform image processing as fast as possible in order to apply the rotation required by the display projection according to the captured IMU data (which is also sampled at the highest possible rate). Images are two-dimensional arrays of pixels. Thus, vectorization can be used for parallel processing to obtain higher performance on the dual-core ARM found on the Cyclone V SoC.
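As an illustration of the rotation step described above, the following is a minimal sketch in plain Python of rotating a small pixel array about its centre using inverse mapping with nearest-neighbour sampling. The function names `rotate_image` and `compensate_roll` are hypothetical and are not taken from the project code; a real implementation would use vectorized or hardware-accelerated operations rather than nested loops.

```python
import math


def rotate_image(pixels, angle_deg, fill=0):
    """Rotate a 2D list of pixel values about its centre by angle_deg.

    Inverse mapping: for every output pixel, find the source pixel that
    lands there after the rotation and copy it (nearest neighbour).
    Positive angles turn the +x axis towards the +y axis of the array.
    """
    height = len(pixels)
    width = len(pixels[0])
    cx = (width - 1) / 2.0
    cy = (height - 1) / 2.0
    cos_a = math.cos(math.radians(angle_deg))
    sin_a = math.sin(math.radians(angle_deg))
    out = [[fill] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            dx = x - cx
            dy = y - cy
            # Rotate the output coordinate back by -angle to find the source.
            sx = cos_a * dx + sin_a * dy + cx
            sy = -sin_a * dx + cos_a * dy + cy
            ix = int(round(sx))
            iy = int(round(sy))
            if 0 <= ix < width and 0 <= iy < height:
                out[y][x] = pixels[iy][ix]
    return out


def compensate_roll(pixels, roll_deg):
    """Counter-rotate the image so the projection appears fixed (assumed sign)."""
    return rotate_image(pixels, -roll_deg)
```

Source pixels that fall outside the array are replaced by `fill`, which is one reason a rotated rectangular image appears cropped.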

The use of Linux on the Cyclone V SoC allows reusing existing Linux applications as a starting point for the design (proof of concept) to later accelerate the system implementation by moving critical code to HW blocks. This is the process that led us to implement the video graphics on the Cyclone V SoC. The I2C IP can also be implemented on the FPGA fabric with the appropriate Linux drivers.

The key challenge of this project is the implementation of the real-time data path that includes the communication between the IMU, the DLP, and the ARM processor running Linux. The I2C communication protocol is used by both the IMU and the DLP. Previous work with the non-standard I2C connector found on Terasic boards resulted in unreliable communication and finally a damaged bus. For this reason, the I2C bus interface is going to be implemented as part of the design to achieve higher flexibility. This implementation can be extended to any application requiring an I2C bus, which is widely used due to its simplicity and low cost.

Parallel processing architectures using state-of-the-art technologies on FPGAs are becoming a requirement when dealing with high-speed and high-throughput applications. In a DLP system, the image data is digital through the entire processing chain, meaning that the image is never an analog signal. This makes DLP technology highly compatible with the digital logic that can be developed using an FPGA. Given that DLP technology involves various research areas, it is important to mention that light sources and projection optics are not studied here.

One important goal is to obtain a still projection by using the angle of rotation that the DLP needs to compensate. To achieve that goal, we have worked with a 9-DOF IMU, the MPU9250 from InvenSense. This device integrates an accelerometer, a gyroscope and a magnetometer (compass). Our approach to developing the control software is based on the creation of an MPU9250 class and an I2C class that uses the Linux I2C driver. The MPU9250 class contains all the low-level methods for setting the configuration parameters and gathering data from the IMU. The I2C class has been created to provide easy communication with the Linux I2C driver. We carried out the hardware-software integration on our platform.
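The two-class split described above can be sketched as follows. This is not the project's code: the class and method names (`I2CBus`, `read_registers`, `read_accel_raw`) are hypothetical, and the sketch assumes the Linux `i2c-dev` character device interface, the default MPU9250 address 0x68 (AD0 low), and the accelerometer data registers starting at 0x3B with big-endian 16-bit values.

```python
import struct

MPU9250_ADDR = 0x68   # assumed: default I2C address with AD0 tied low
ACCEL_XOUT_H = 0x3B   # first of the six accelerometer data registers
I2C_SLAVE = 0x0703    # ioctl request number from <linux/i2c-dev.h>


class I2CBus:
    """Thin wrapper around a Linux /dev/i2c-N device (hypothetical class)."""

    def __init__(self, bus_path, address):
        import fcntl  # imported here so the module also loads off-target
        self._dev = open(bus_path, "r+b", buffering=0)
        fcntl.ioctl(self._dev, I2C_SLAVE, address)

    def read_registers(self, start, length):
        # Write the register pointer, then read; this issues two separate
        # I2C transactions rather than a repeated start.
        self._dev.write(bytes([start]))
        return self._dev.read(length)

    def close(self):
        self._dev.close()


def decode_accel(raw6):
    """Decode six bytes (XH, XL, YH, YL, ZH, ZL) into signed 16-bit counts."""
    return struct.unpack(">hhh", raw6)


class MPU9250:
    """Low-level IMU access built on top of any bus with read_registers()."""

    def __init__(self, bus):
        self._bus = bus

    def read_accel_raw(self):
        return decode_accel(self._bus.read_registers(ACCEL_XOUT_H, 6))
```

On the target this might be used as `MPU9250(I2CBus("/dev/i2c-1", MPU9250_ADDR)).read_accel_raw()`, where the bus number is an assumption that depends on the board configuration. Keeping the bus behind a small interface also lets the IMU class be exercised with a fake bus on a development machine.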

In linear algebra, a rotation in 3D Euclidean space can be described by a rotation matrix. Rotations about the x, y and z axes are conventionally called roll, pitch and yaw, respectively.

A point affected by such a rotation is computed by multiplying the point by the rotation matrix. The following matrices rotate vectors by an angle about the x, y and z axes.
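The matrices themselves are not reproduced in this text. As a hedged reconstruction, the elemental rotations can be written as below, assuming the frame-rotation (passive) convention commonly used in accelerometer tilt-sensing notes, with roll φ about x, pitch θ about y and yaw ψ about z. The active convention, which rotates the vector instead of the frame, is the transpose of each matrix.

$$
R_x(\phi)=\begin{pmatrix}1&0&0\\0&\cos\phi&\sin\phi\\0&-\sin\phi&\cos\phi\end{pmatrix},\quad
R_y(\theta)=\begin{pmatrix}\cos\theta&0&-\sin\theta\\0&1&0\\\sin\theta&0&\cos\theta\end{pmatrix},\quad
R_z(\psi)=\begin{pmatrix}\cos\psi&\sin\psi&0\\-\sin\psi&\cos\psi&0\\0&0&1\end{pmatrix}
$$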

There are six possible orderings of these rotation matrices. In our case we are interested in the roll angle, so we obtain its value from the acceleration reported by the IMU and a set of trigonometric identities based on the atan2 function. The rotation sequence Rxyz is used to eliminate the yaw rotation:

We can rewrite it to relate the roll φ and pitch θ angles to the normalized accelerometer reading Gp:

Solving for the roll and pitch angles and using the subscript xyz to denote that the roll and pitch angles are computed according to the rotation sequence Rxyz, gives:
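The equations for this step are not reproduced in this text. A consistent reconstruction, under the assumption that the elemental matrices are written in the passive convention (for example, the second row of R_x(φ) is (0, cos φ, sin φ)) and that the sensor measures only gravity along +z when level, is the following. Applying the sequence Rxyz to the gravity vector removes the yaw angle ψ:

$$
\frac{\mathbf{G}_p}{\lVert\mathbf{G}_p\rVert}
= R_x(\phi)\,R_y(\theta)\,R_z(\psi)\begin{pmatrix}0\\0\\1\end{pmatrix}
= \begin{pmatrix}-\sin\theta\\ \cos\theta\,\sin\phi\\ \cos\theta\,\cos\phi\end{pmatrix}
$$

Dividing the second component by the third, and the first by the norm of the other two, gives:

$$
\tan\phi_{xyz}=\frac{G_{py}}{G_{pz}},\qquad
\tan\theta_{xyz}=\frac{-G_{px}}{\sqrt{G_{py}^{2}+G_{pz}^{2}}}
$$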

The obtained value can be adjusted by selecting the right resolution of the IMU.
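A minimal sketch of this computation follows, assuming the common atan2-based tilt formulas for the Rxyz sequence and the MPU9250 accelerometer sensitivities for its four full-scale ranges (16384, 8192, 4096 and 2048 LSB/g for ±2, ±4, ±8 and ±16 g). The function names are hypothetical and the sign conventions depend on how the sensor is mounted.

```python
import math

# Accelerometer sensitivity in LSB per g for each full-scale range (in g).
ACCEL_LSB_PER_G = {2: 16384.0, 4: 8192.0, 8: 4096.0, 16: 2048.0}


def raw_to_g(raw_xyz, full_scale_g=2):
    """Convert signed 16-bit accelerometer counts to units of g."""
    scale = ACCEL_LSB_PER_G[full_scale_g]
    return tuple(value / scale for value in raw_xyz)


def roll_pitch_deg(gx, gy, gz):
    """Roll and pitch in degrees from an accelerometer reading.

    Only the direction of (gx, gy, gz) matters, so the reading does not
    need to be normalized first.
    """
    roll = math.atan2(gy, gz)
    pitch = math.atan2(-gx, math.hypot(gy, gz))
    return math.degrees(roll), math.degrees(pitch)
```

A smaller full-scale range gives more counts per g and therefore a finer angular resolution, at the cost of saturating earlier under strong accelerations.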

DE10-NANO to DLP

The DLP board was originally designed to sit physically on top of the BeagleBone Black. We have created a PCB adapter to connect the DLP to the GPIO connectors of the DE10-Nano. The PCB routes the GPIO pins of the DE10-Nano to the DLP board pinout, and thanks to the routing flexibility of the FPGA, a custom mapping is provided to access the DLP board from the Cyclone V SoC domain.

The PCB design started with a schematic (drawn with the open source software KiCad) based on the documentation provided by Terasic. After that, we used Altium to create the files needed for PCB fabrication.

The proposed framework begins by establishing a communication channel to transfer image data between the HPS and the FPGA using an internal bus in the Cyclone V SoC. The data obtained from an image file is handled by the custom logic deployed in the FPGA fabric. The custom logic includes a VGA controller and a small buffer for the 24-bit data required by the DLP.

The HPS logic and FPGA fabric are connected through the Advanced eXtensible Interface (AXI) bridge. The Qsys tool inside Quartus allows the creation of a system with this type of connection and allows the HPS to access common digital blocks such as PIO, memory, PLLs, etc. For this research, the system uses a PIO for the data transfer and the control signals that need to be sent by the HPS to the logic implemented in the FPGA fabric.
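As a rough sketch of how the HPS side could push one 24-bit pixel word into a PIO, the code below maps the bridge through `/dev/mem`. Several details are assumptions rather than facts from this project: the PIO is assumed to hang off the lightweight HPS-to-FPGA bridge (base 0xFF200000, 2 MB span on the Cyclone V), `PIO_PIXEL_OFFSET` is a hypothetical offset that would come from the Qsys address map, and the red-green-blue bit order is illustrative only.

```python
import mmap
import os
import struct

LWHPS2FPGA_BASE = 0xFF200000  # Cyclone V lightweight HPS-to-FPGA bridge base
LWHPS2FPGA_SPAN = 0x00200000  # 2 MB address span of that bridge
PIO_PIXEL_OFFSET = 0x0000     # hypothetical: taken from the Qsys address map


def pack_rgb888(red, green, blue):
    """Pack three 8-bit colour components into one 24-bit word (assumed order)."""
    return ((red & 0xFF) << 16) | ((green & 0xFF) << 8) | (blue & 0xFF)


def write_pixel_word(word, mem_path="/dev/mem"):
    """Write one 32-bit word to the PIO data register (requires root on target)."""
    fd = os.open(mem_path, os.O_RDWR | os.O_SYNC)
    try:
        region = mmap.mmap(fd, LWHPS2FPGA_SPAN, mmap.MAP_SHARED,
                           mmap.PROT_READ | mmap.PROT_WRITE,
                           offset=LWHPS2FPGA_BASE)
        try:
            # Slice assignment may not be a single 32-bit bus access; a C
            # program with a volatile pointer gives tighter control.
            start = PIO_PIXEL_OFFSET
            region[start:start + 4] = struct.pack("<I", word)
        finally:
            region.close()
    finally:
        os.close(fd)
```

A call such as `write_pixel_word(pack_rgb888(255, 0, 0))` would then drive the PIO outputs, provided the FPGA has been configured with a matching design.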

A video is essentially a sequence of images displayed in rapid succession. For the human eye, the refresh rate must be at least about 30 images per second for motion to appear continuous. As a result, display circuits need to display at least 30 frames (screens) per second. To reduce flickering, display technologies usually run at a much higher rate, and for this implementation the FPGA controller is expected to deliver about 60 frames per second on average.
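The relation between pixel clock and frame rate can be checked with a short calculation. The sketch below assumes the 25 MHz VGA controller clock mentioned elsewhere in this document and the usual 640x480 VGA timing of 800 pixel clocks per line and 525 lines per frame (visible area plus blanking); the actual timing parameters of the implemented controller are not stated here.

```python
def frame_rate_hz(pixel_clock_hz, h_total, v_total):
    """Frames per second for a raster of h_total x v_total pixel clocks."""
    return pixel_clock_hz / (h_total * v_total)


# Assumed timing: 800 x 525 total pixel clocks per frame at 25 MHz.
vga_rate = frame_rate_hz(25_000_000, 800, 525)
print(f"{vga_rate:.2f} frames per second")
```

Under these assumptions the result is slightly below 60 frames per second, which is consistent with the expected average rate.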


To use the hardware I2C peripheral found on the HPS, an HPS-to-FPGA mapping was required. The compilation of the Intel guide produced the following result.

Using an ATA (IDE) cable that we had on hand (about 50 cm long) showed that the input signals of the DLP EVM are very sensitive to noise, especially the data signals. Afterwards we used a shorter, 10 cm cable that we made to resemble the ATA (IDE) cable, and it worked.

While creating ground planes in the PCB software, the connections of 5 pins were deleted, and our team did not notice until the PCB had been fabricated. As a result, the PCB was missing important data signals, and 5 jumper wires had to be soldered.

It was difficult to attach the PCB because the Arduino header of the DE10-Nano is taller than the common female headers that can be attached to the GPIO.

We found that the DLP 2000 EVM has a data pin map that is customized for the BeagleBone Black. The documentation provided by Texas Instruments is rather vague about this topic, but careful inspection of the board's schematic files shows that the colors are not mapped according to the DLPC2607 datasheet, but rather to the BeagleBone Black 24-bit LCD interface, which is clearly stated as an erratum in the BeagleBone wiki.

We also found that the DLP controller (DLPC2607) has an internal video-graphics processing block and a frame memory controller attached to a DDR DRAM. In our project we therefore implemented only the VGA controller, to offload work from the ARM processor, while leaving the memory handling to the DLPC2607.

The HPS-FPGA examples provided by Terasic proved that the ARM processor can send bit signals to the FPGA blocks. However, this could not be implemented in time for our project.

The MPU9250 class and the I2C class proved to be working in Linux.

The LXDE distribution provided by Terasic uses the Intel Video and Image Processing suite for computer graphics processing through HDMI. The DE10-Nano Rotation Correction in Real Time application proved to be working from the Linux side by plugging the HDMI connector of the DE10-Nano into the HDMI input of the DLP-3000 EVM.

After following AN 706: Mapping HPS IP Peripheral Signals to the FPGA Interface to connect the HPS I2C peripheral to the FPGA fabric, we did not succeed in the following steps:

- Preloader and U-Boot Customization
- Generating and Compiling the Preloader
- Booting Linux Using Prebuilt SD Card Image

Git was used as the version control software for the project, and GitHub was used for storing and sharing code. The .sof files for FPGA configuration have been uploaded, but the binaries for Linux were not. However, the code files available contain makefiles that allow easy compilation to obtain the required Linux binaries.

At the beginning, the system was designed with a memory to store the image, and a framebuffer architecture was considered, as this is the standard way to process computer graphics. In the end, however, a much simpler approach was taken because the DLPC2607 handles all the memory requirements. Using the FPGA in conjunction with the DLPC2607, a VGA controller was implemented with a single 24-bit register that works as a simple data buffer.

The ARM processor on the Cyclone V SoC runs at 800 MHz, while the implemented VGA controller runs at 25 MHz. At first sight the ARM processor seems far faster; however, the VGA controller changes a pixel value every 40 ns. Running Linux on a processor is much more complex than changing pixel values, so an ARM-Linux program is needed to test whether it is possible to change values every 40 ns consistently under Linux. On top of that, the application has to take into account the other processes and calculations that the ARM processor needs to perform to achieve real-time operation.
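The timing budget implied by these two clock figures can be worked out directly. The sketch below only restates the arithmetic; the function names are hypothetical.

```python
def pixel_period_ns(pixel_clock_hz):
    """Time between consecutive pixel updates, in nanoseconds."""
    return 1e9 / pixel_clock_hz


def cpu_cycles_per_pixel(cpu_clock_hz, pixel_clock_hz):
    """Number of CPU clock cycles available per pixel period."""
    return cpu_clock_hz / pixel_clock_hz


period = pixel_period_ns(25e6)               # 40 ns at 25 MHz
budget = cpu_cycles_per_pixel(800e6, 25e6)   # 32 cycles at 800 MHz
```

A budget of 32 processor cycles per pixel leaves very little room for memory-mapped writes, system calls or scheduling jitter, which is why the per-pixel path is a natural candidate for the FPGA fabric.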

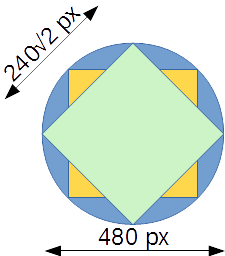

The cropping of the projection was an unintended but expected result, because the DMD used has a rectangular resolution. The maximum extent of the rotated projection is determined by the smaller dimension of the DMD resolution. On top of that, this value has to be treated as the length of the diagonal of a square, or as the diameter of a circle. The result can be used as a guideline for UI design, since it gives the maximum pixel dimensions that can be used successfully with automatic rotation correction of the projection.
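This guideline can be expressed as a small calculation. The sketch below assumes a 640x360 DMD resolution as an example value; the function names are hypothetical.

```python
import math


def max_rotatable_diameter(width_px, height_px):
    """Diameter of the largest circle that fits inside the display area."""
    return min(width_px, height_px)


def max_rotatable_square_side(width_px, height_px):
    """Side of the largest square whose diagonal fits in that circle."""
    return int(math.floor(min(width_px, height_px) / math.sqrt(2)))


# Example with an assumed 640 x 360 DMD.
diameter = max_rotatable_diameter(640, 360)
side = max_rotatable_square_side(640, 360)
```

Content kept inside a circle of that diameter, or a centred square of that side, remains fully visible for any rotation angle.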

This project addresses various layers, from high-level UI applications with object-oriented programming to low-level FPGA coding. The toughest layer the team faced was the Linux kernel, where the high-level software layer connects with the low-level hardware layer. Linux kernel compilation is a complex process that could not be accomplished during the development of this project. There are many low-level directives that require deep knowledge of how to handle memory while Linux is running. This also applies to any type of graphics handling from the Linux side. Routing and validating the correct operation of the Cyclone V SoC was also complicated, and the mapping of HPS blocks to the FPGA was not accomplished.

We found a huge difference between software and hardware coding. The number of examples for simple applications is vast for software, and on top of that, standardization has been widely followed so that code can be easily shared. On the other hand, as hardware systems become more complicated, the use of closed IPs is common, and the flexibility and the possibility of studying the IP are very limited. An example is the 255-page user guide for the Video and Image Processing Suite, which provides many functionalities that are not needed in this project; that is the reason a simple VGA controller was implemented on the FPGA instead. The problem arises when the available examples turn out to depend on proprietary IPs, which must either be adopted or block progress. From an academic viewpoint this is counterproductive, as teaching how to use VIP does not teach how computer graphics works, and there are no examples using open source hardware designs.

This project implemented the application in the most standard form possible, so that it can run on any processor that runs Linux and has an I2C bus. At the same time, the FPGA configuration is given in VHDL so that it can be used with FPGAs from any vendor.

Create a simple 24-bit counter program on the HPS that sends the bits to the data input of the VGA controller implemented on the FPGA.

Create an I2C controller for the DLP 2000 EVM based on the I2C controller found on the HDMI_TX FPGA example provided by Terasic.

Create a basic Linux graphic driver to run X.Org and connect it to the VGA controller implemented on the FPGA.
