Data Management

Build SMTP relay with email scanner capability

AP001 »

An SMTP server can handle email sending requests over a single connection or multiple connections. The SMTP relay forwards each message from the sender to its destination. Emails may carry attachments, which need to be scanned before they are sent to the public network: an attachment may contain unwanted files or executables that are dangerous to everyone who receives the email. An attachment may also contain compressed files, which need to be extracted and checked before sending. A file may also need DRM applied to it before it is sent.
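As a rough illustration of the scanning step, the sketch below parses a raw message with Python's standard `email` package, walks its attachments, and looks inside ZIP archives for blocked file names. The blocklist, the function names, and the demo message are assumptions made for this example, not part of the proposal; it checks file names only, does not inspect file contents or nested archives, and leaves out the relay and DRM steps entirely.

```python
import io
import zipfile
from email import message_from_bytes
from email.message import EmailMessage

# Hypothetical blocklist for illustration; a real relay would define its own policy.
BLOCKED_EXTENSIONS = (".exe", ".bat", ".cmd", ".scr", ".vbs")


def is_blocked(filename):
    """Return True if the file name ends with a blocked extension."""
    return filename.lower().endswith(BLOCKED_EXTENSIONS)


def scan_attachment(filename, payload):
    """Return the blocked names found in one attachment.

    ZIP archives are opened in memory and their member names are checked too.
    """
    findings = []
    if is_blocked(filename):
        findings.append(filename)
    if zipfile.is_zipfile(io.BytesIO(payload)):
        with zipfile.ZipFile(io.BytesIO(payload)) as archive:
            for member in archive.namelist():
                if is_blocked(member):
                    findings.append(f"{filename}/{member}")
    return findings


def scan_message(raw_bytes):
    """Parse a raw message and scan every part that has a file name."""
    message = message_from_bytes(raw_bytes)
    findings = []
    for part in message.walk():
        filename = part.get_filename()
        if filename is None:
            continue  # not an attachment
        payload = part.get_payload(decode=True) or b""
        findings.extend(scan_attachment(filename, payload))
    return findings


def make_demo_message():
    """Build a small test message carrying a ZIP that hides an .exe file."""
    archive_buffer = io.BytesIO()
    with zipfile.ZipFile(archive_buffer, "w") as archive:
        archive.writestr("readme.txt", "hello")
        archive.writestr("setup.exe", "not a real executable")
    message = EmailMessage()
    message["From"] = "sender@example.com"
    message["To"] = "recipient@example.com"
    message["Subject"] = "demo"
    message.set_content("See attachment.")
    message.add_attachment(archive_buffer.getvalue(), maintype="application",
                           subtype="zip", filename="docs.zip")
    return message.as_bytes()
```

A relay built along these lines could call `scan_message` on each incoming message and refuse to forward it when the returned list is not empty.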

Transportation

Smart Driving Assistance

AP002 »

This project uses the OBD-II port of a vehicle to fetch data from the vehicle, monitor parameters relating to emissions, forecast engine failures, and recommend good driving practices to conserve fuel.
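To give a feel for the data an OBD-II port provides, here is a minimal sketch that decodes a few standard "show current data" (mode 01) responses using the scaling formulas from the public OBD-II PID tables. The function names and the choice of PIDs are assumptions for illustration; the proposal does not say which parameters it reads or how it talks to the port.

```python
def decode_pid(pid, data):
    """Decode the data bytes of a mode 01 response for a few common PIDs."""
    if pid == 0x04:  # calculated engine load, percent
        return data[0] * 100.0 / 255.0
    if pid == 0x05:  # engine coolant temperature, degrees Celsius
        return float(data[0] - 40)
    if pid == 0x0C:  # engine speed, revolutions per minute
        return ((data[0] << 8) | data[1]) / 4.0
    if pid == 0x0D:  # vehicle speed, km/h
        return float(data[0])
    raise ValueError(f"PID 0x{pid:02X} is not handled in this sketch")


def parse_response(hex_text):
    """Parse a response such as '41 0C 1A F8' into (pid, value)."""
    raw = bytes.fromhex(hex_text)
    if len(raw) < 3 or raw[0] != 0x41:  # 0x41 marks a reply to a mode 01 request
        raise ValueError("not a mode 01 response")
    pid = raw[1]
    return pid, decode_pid(pid, raw[2:])
```

For example, `parse_response("41 0C 1A F8")` yields PID `0x0C` with an engine speed of 1726.0 rpm, since (0x1A × 256 + 0xF8) / 4 = 1726.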

Water Related

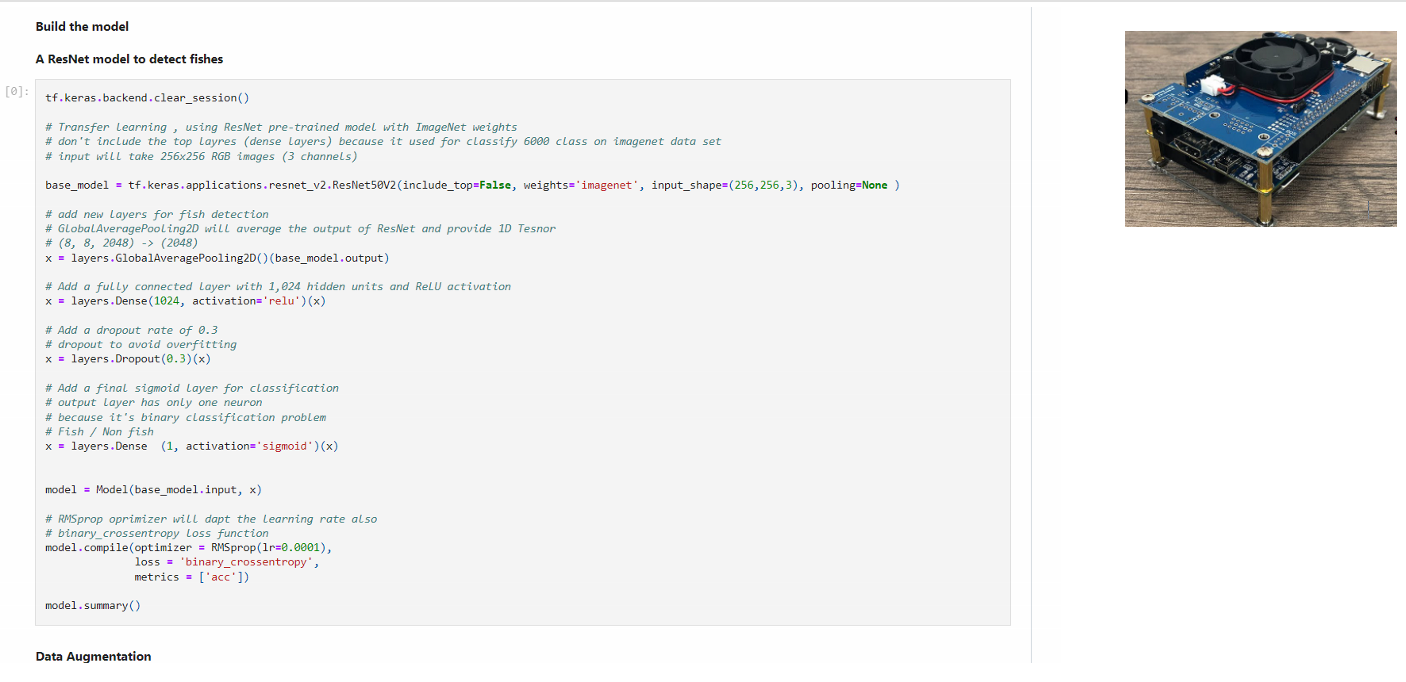

sustainable fishing

AP005 »

Our project is developing a cutting-edge end-to-end product to help marine species, so that over a 5-10 year period wild capture is rejuvenated naturally. Our solution uses existing hardware and software, integrated and applied in a unique way.

Other: Wildlife and forest preservation

iFireFighter

AP006 »

Humanity is currently facing a very major problem, something that has the potential to drastically reduce our population and ruin the lives of our future generations: Climate Change. Due to the increasing intensity of climate change and the lack of any meaningful effort to tackle it, the problems that accompany it are worsening year by year.

One of these problems, which has set the world ablaze, is forest fires. The frequency, intensity, and affected area of forest fires are steadily increasing every year. Each year, it seems, the California wildfires or the Australian bushfires are more intense and cause more damage than the year before.

Once a fire has reached critical mass and spread beyond a certain limit, getting it under control is extremely costly and time-consuming, and takes a lot of manpower and effort. The natural remedy is to nip the problem in the bud before it has a chance to blossom.

All major wildfires and forest fires start from a much smaller localized fire that, once it reaches critical mass, grows out of control. Our project proposes to detect these localized fires and alert the relevant authorities before they grow out of control.

Fighting a fire after it has grown past critical mass is extremely costly. From the equipment to the resources to the manpower and personnel, along with the potential for an immense loss of human life, a lot of money must be thrown at the problem to get the fire back under control.

Our project would reduce these costs massively since the focus would then shift from getting a raging uncontrollable fire back under control to quickly and efficiently extinguishing a much smaller fire before it spreads.

Smart City

(Panotti's Ears) Real time Voice Analytics Co-processor

AP007 »

Speech recognition models have been used extensively on various platforms to provide ease of use, digital smart assistance, and hands-free control. At the cutting edge of this technology, the use of hidden Markov models is common. To improve the computational efficiency of hidden Markov model-based speech recognition systems, various techniques are used, amongst which the Viterbi beam search algorithm is one of the best. However, for large-vocabulary speech recognition models with larger beam widths, the beam search algorithm’s sparse matrix operations create a severe bottleneck. Traditionally, GPUs have been used to accelerate such models, but because the algorithm is not highly parallel, GPUs don’t provide an efficient solution, and the power constraints of IoT devices rule them out entirely at the edge.

In this project, we research and formulate an FPGA-based co-processor (RTL-level abstraction) to accelerate the sparse matrix operations of the beam search algorithm, so that it can be used on edge devices to revolutionize how we interact with IoT edge devices.
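For readers unfamiliar with the algorithm, the following is a small software sketch of Viterbi beam search over a hidden Markov model, with the transition matrix stored sparsely as nested dictionaries. It only illustrates the general technique and is not the project's RTL design; the function names, the dictionary layout, and the log-probability representation are all assumptions made for this example.

```python
import math


def prune(hypotheses, beam_width):
    """Keep only the beam_width best-scoring states."""
    best = sorted(hypotheses.items(), key=lambda item: item[1][0], reverse=True)
    return dict(best[:beam_width])


def viterbi_beam_search(observations, log_start, log_trans, log_emit, beam_width):
    """Return (best_path, best_log_prob) for a sequence of observations.

    log_start[s], log_trans[s][t] and log_emit[s][o] hold log probabilities;
    entries that are absent are treated as probability zero (sparse storage).
    """
    beam = {}  # state -> (log probability, path ending in that state)
    for state, start_score in log_start.items():
        emit = log_emit.get(state, {}).get(observations[0], -math.inf)
        if emit > -math.inf:
            beam[state] = (start_score + emit, [state])
    beam = prune(beam, beam_width)

    for obs in observations[1:]:
        candidates = {}
        for prev, (prev_score, path) in beam.items():
            # Only non-zero transitions are stored, so only they are visited.
            for nxt, trans_score in log_trans.get(prev, {}).items():
                emit = log_emit.get(nxt, {}).get(obs, -math.inf)
                if emit == -math.inf:
                    continue
                score = prev_score + trans_score + emit
                if nxt not in candidates or score > candidates[nxt][0]:
                    candidates[nxt] = (score, path + [nxt])
        beam = prune(candidates, beam_width)

    if not beam:
        return [], -math.inf
    best_state = max(beam, key=lambda state: beam[state][0])
    return beam[best_state][1], beam[best_state][0]
```

The inner loop over stored transitions is the sparse matrix work the abstract refers to: each frame touches only the rows of the surviving states, so memory access is irregular and hard to parallelize. With a beam width equal to the number of states, the search reduces to exact Viterbi decoding.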

Other: Agriculture & Water Sustainability

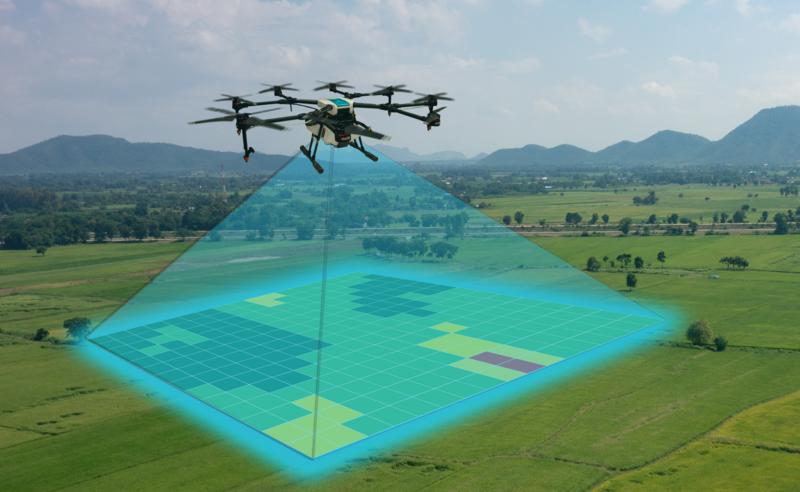

Water Stress Detection using Aerial & Metrological Data(Agri-Bird)

AP008 »

Water is essential in agriculture. Farms use it to grow fresh produce and to sustain their livestock. Major environmental functions and human needs critically depend on water. In regions of the world affected by water scarcity, economic activities can be constrained by water availability, leading to competition both among sectors and between human uses and environmental needs.

According to a 2017-18 government survey, agriculture contributes 18.9% of Pakistan’s GDP and employs 42.3% of its labor force. But with agriculture using about 90% of Pakistan’s water supply, and Pakistan’s water crisis threatening to exhaust the country’s water resources by 2040, there is a dire need for solutions that help in the efficient use of water in agriculture, and farming in particular.

To combat this problem and provide a sustainable mechanism to farmers, we propose an aerial collection and soil-sampling data framework that will lead to sustainable, precise, secure, and efficient farming. Our solution will focus on the water-stress or drought-stress of plants.

Water stress refers to the water deficit in plants and has been shown to be a very useful piece of information in farming. In addition to being a good predictor of the plantation's yield, water stress also allows us to respond in time to areas that are under-watered or over-watered. Water stress is most valuable as information for planning irrigation, but it can also be a useful indicator of areas that are at risk of wildfires.

Our solution proposes to mount an FPGA on the aerial unit, where it will collect data with the help of modules, process it on the edge, and then transfer all the relevant data to the cloud for further processing and analysis. To give our results more credibility, we will also collect soil-sampled data and combine it with the aerial data in the cloud to produce our final results. Our results aim to provide accurate predictions, useful suggestions to farmers, routing data for irrigation channels, and warnings of risks and disasters.

Industrial

FPGA based Wireless Sensor Networks

AP009 »

This project implements Wireless Sensor Networks (WSN) on FPGA, connecting multiple nodes with different sensors from analog devices and actuators, to make industrial work less dependent on physical human control. Data management will be handled using Microsoft Azure cloud services, making the data accessible to the controller as and when required, and the specifications can be tuned at the admin end. The project aims to build cloud-based applications on FPGA and to explore new emerging technologies to make them system independent using FPGA.

Smart City

Model-Predictive-Traffic-Management

AP010 »

We are proposing to build a hardware platform that's able to identify individual vehicles in real-time. The device will be connected to a real-time traffic model that's run on the cloud. Then by placing this device at a strategic location in a city, over time, we would be able to build a very accurate predictive traffic model.

Overall, we expect our project (hardware platform together with the back-end cloud-based software) will provide much better tools for city authorities to manage traffic than what's available today.

Other: Educational

Logisim 2.0

AP011 »

Digital circuit design methods are considered a fundamental knowledge base in Informatics, Engineering, and Computer Science study programs, because digital circuits constitute the basis of all the digital systems used these days. Knowledge of digital circuits is a basic requirement for the successful study and implementation of the complex technologies and systems that are built around them. In courses devoted to the design of digital circuits, it is important that students are able to verify their designs with corresponding experiments. This is particularly useful in introductory engineering courses, because hands-on labs in the early years of study suffer from restricted laboratory capacity and require student training on the use of laboratory equipment. In addition, due to the COVID-19 pandemic, it is currently not possible to visit a physical library. Based on the above, using the educational software tool Logisim together with cloud support provides significant advantages for the teaching of digital circuits, design concepts, and computer architecture.

Transportation

AI based data-driven approach and hardware accelerators (FPGA) to predict the SoC, SoH and RuL of the LiBs

AP012 »

With the advancement in digitalization and the availability of reliable sources of information that provide credible data, Artificial Intelligence (AI) has emerged to solve complex computational real-life problems that were challenging earlier. However, Artificial Neural Networks (ANNs) place heavy demands on the main processor and need high memory bandwidth, and hence main processors alone cannot provide the expected levels of performance. As a result, hardware accelerators such as Graphics Processing Units (GPUs), Field Programmable Gate Arrays (FPGAs), and Application Specific Integrated Circuits (ASICs) have been used to improve the overall performance of AI-based applications. FPGAs are widely used for AI implementation because they offer features such as high-speed acceleration and low power consumption, which cannot be achieved using central processors and GPUs.

In electric-powered vehicles (E-Mobility), Battery Management Systems (BMS) perform different operations to make better use of the energy stored in lithium-ion batteries (LiBs). LiBs are a non-linear electrochemical system that is very complex and time-variant in nature. Because of this, estimating states such as State of Health (SoH) and Remaining Useful Life (RuL) is very difficult. The goal is to develop an advanced AI-based BMS that can precisely indicate the states of the LiBs, which will be useful in E-Mobility. This gives useful information for predicting when the battery should be removed or replaced, and helps to optimize battery performance and extend battery lifespan.

Industrial

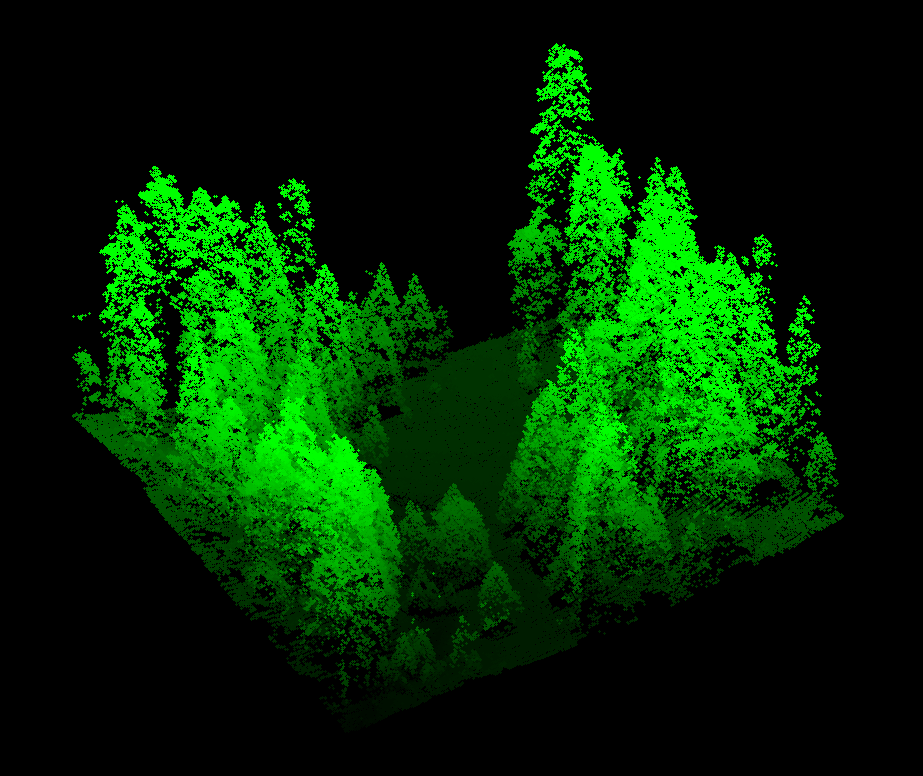

LIDAR

AP013 »

Laser imaging, detection, and ranging (LIDAR) is a method for measuring the distance to a targeted object in space. It works by aiming a laser at an object, firing pulses of light, and receiving their reflections with a light sensor next to the laser. The time it takes to receive the reflected light pulses can then be used to determine the distance of the object.
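The time-of-flight relationship described above can be sketched in a few lines. Because the pulse travels to the object and back, the one-way distance is half the round-trip time multiplied by the speed of light. The function name is hypothetical, and the sketch assumes the vacuum speed of light and an ideal, delay-free sensor.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value; air is marginally slower


def distance_from_round_trip(round_trip_seconds):
    """Return the one-way distance in metres for a measured round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse covers the distance twice (out and back), hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2.0
```

A round trip of 100 nanoseconds corresponds to roughly 15 metres, which shows why the measurement electronics need timing resolution on the order of nanoseconds or better.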

This project will utilize a laser that can be optically steered to aim in any direction. By scanning the laser in every direction, a 3-dimensional image can be generated that gives a complete view of all surrounding obstacles. The ideal application for such a system is within self-driving cars, to detect other vehicles and pedestrians. It is also useful for 3D mapping, either on land or underwater.
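One common way to turn steered range measurements into a 3-dimensional image is to convert each (distance, azimuth, elevation) sample into a Cartesian point. The sketch below assumes a particular angle convention (azimuth measured in the horizontal plane from the x-axis, elevation measured upward from that plane); the proposal does not specify one, and the function names are hypothetical.

```python
import math


def to_cartesian(distance_m, azimuth_deg, elevation_deg):
    """Convert one range sample to an (x, y, z) point in metres."""
    azimuth = math.radians(azimuth_deg)
    elevation = math.radians(elevation_deg)
    horizontal = distance_m * math.cos(elevation)  # projection onto the ground plane
    x = horizontal * math.cos(azimuth)
    y = horizontal * math.sin(azimuth)
    z = distance_m * math.sin(elevation)
    return x, y, z


def scan_to_point_cloud(samples):
    """Convert an iterable of (distance, azimuth, elevation) samples to points."""
    return [to_cartesian(d, az, el) for d, az, el in samples]
```

Collecting the points from a full sweep gives a point cloud that downstream software can use to locate obstacles around the sensor.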

The Cloud Connectivity kit is ideal for this application, as it includes an FPGA that is able to rapidly process the laser measurements in real time, which is essential for an application such as autonomous vehicles. The Wi-Fi connectivity combined with the Azure IoT application makes the platform even more powerful by allowing results to be stored, processed further, and then visualized to derive useful insights.

Water Related

sustainable fishery

AP014 »

Our venture is developing a cutting-edge end-to-end product to help marine species, so that over a 5-10 year period wild capture is rejuvenated naturally. Our solution uses existing hardware and software, integrated and applied in a unique way.

Blind fishing and overfishing mean that marine resources and wild capture are no longer a bottomless supply.

This overfishing puts one third of the world's population in trouble, especially people in under-developed and developing countries who rely on the ocean as a source of cheap protein.