Marine Related

A smart underwater microbial delivery system for coral reef habitat recovery

EM043

In this project, we propose the first underwater, deep-learning-enabled intelligent microbial delivery system for coral reef habitat recovery. The system will deliver coral probiotics and monitor their efficacy. Delivery is precisely regulated by a deep learning network that monitors color changes in the corals.
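The abstract does not specify how the network output drives delivery. As a minimal sketch, assume the network produces a per-frame bleaching score between 0 (healthy color) and 1 (fully bleached); the hypothetical `DoseController` below smooths that score and releases a probiotic dose only when the smoothed score exceeds a threshold, with a cooldown between doses. The threshold, smoothing factor, and cooldown are illustrative assumptions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class DoseController:
    """Hypothetical dosing rule driven by a bleaching score in [0, 1]."""
    threshold: float = 0.6   # assumed smoothed score above which corals look stressed
    alpha: float = 0.3       # exponential smoothing factor for noisy per-frame scores
    cooldown: int = 5        # assumed minimum number of frames between doses
    smoothed: float = 0.0
    frames_since_dose: int = 10**9
    doses: List[int] = field(default_factory=list)

    def update(self, frame: int, score: float) -> bool:
        """Feed one network output; return True if a dose is released."""
        if not 0.0 <= score <= 1.0:
            raise ValueError("score must be in [0, 1]")
        # Exponential moving average damps single-frame misclassifications.
        self.smoothed = self.alpha * score + (1 - self.alpha) * self.smoothed
        self.frames_since_dose += 1
        if self.smoothed > self.threshold and self.frames_since_dose >= self.cooldown:
            self.doses.append(frame)
            self.frames_since_dose = 0
            return True
        return False
```

With a constant score of 1.0 for ten frames, the smoothed score crosses 0.6 at frame 2 and the cooldown spaces the next dose to frame 7.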

Other: SGP - Sustainable Agriculture

The Green Machine - Gardening for a Better Future

AP116

Our project aims to provide a smart, user-friendly mini-greenhouse management system for the home that lets users grow, monitor, and tend plants efficiently. This encourages people to grow their own food by removing the difficulties that gardening poses in a busy lifestyle.

Smart City

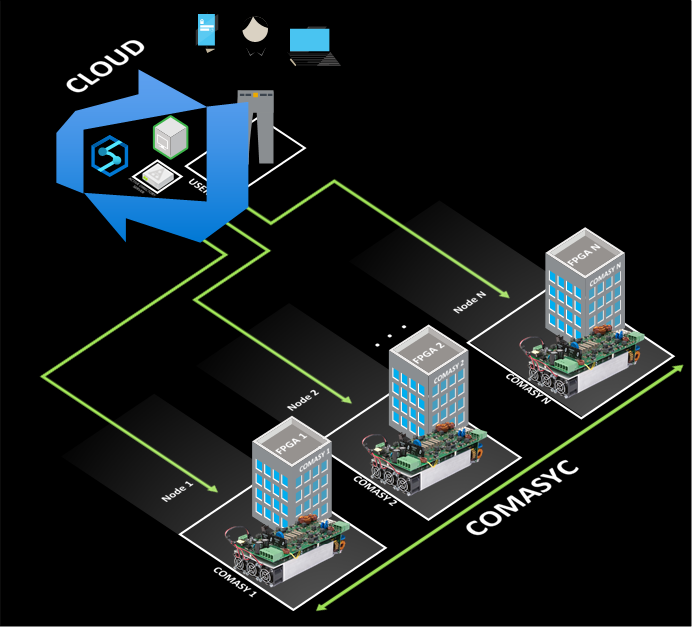

Converter management system connected to the cloud (COMASYC)

AS009

Energy is a priority for humanity, and green energy sources are necessary both to meet the energy shortage and to preserve the environment. Power electronic converters play a key role in integrating them.

We propose a cloud-connected converter management system, controlled by an FPGA, for Industry 4.0 applications. It can integrate solar panel arrays (PVs), batteries, and loads simultaneously, each through a converter chosen according to user requirements. The system therefore handles modulation, runs control algorithms, collects information, and distributes energy.

For example, if the user requires a PV array, the perturb and observe (P&O) maximum power point tracking (MPPT) algorithm is applied to extract the maximum energy from the PVs.
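P&O repeatedly nudges the PV operating point and keeps moving in the same direction while power rises, reversing direction when power falls. The sketch below runs that loop against a toy P-V curve; `pv_power`, the step size, and the starting voltage are assumptions for illustration, not COMASYC parameters, and on real hardware the loop would typically adjust a converter duty cycle rather than set a voltage directly.

```python
def pv_power(v: float) -> float:
    """Toy P-V curve (assumption): power peaks at 17 V with 100 W."""
    return max(0.0, 100.0 - 0.5 * (v - 17.0) ** 2)


def perturb_and_observe(v0: float, step: float, iterations: int) -> float:
    """Classic P&O: keep perturbing in the direction that increased power."""
    v = v0
    p_prev = pv_power(v)
    direction = 1.0
    for _ in range(iterations):
        v += direction * step
        p = pv_power(v)
        if p < p_prev:
            # Power dropped, so the last perturbation moved away from the MPP.
            direction = -direction
        p_prev = p
    return v
```

Starting from either side of the peak, the operating point settles into an oscillation of one step around 17 V; this steady-state ripple is the usual price of P&O's simplicity.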

Parameters such as the energy consumption and production of each node are also important for redistributing energy, so the model has a single "brain" connected to every node. We therefore propose a novel control scheme based on cloud-managed load balancing for high-priority requests. The cloud is the single brain that controls the system and organizes the power flow among nodes. The project focuses on the Peruvian context, in places where energy has to be a priority.
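The balancing rule itself is not described. One simple possibility, sketched here under that assumption, is a greedy allocator in the cloud: it pools the surplus reported by producing nodes and serves consumer requests in priority order, so high-priority requests are filled first. The `Request` type, node names, and energy figures are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Request:
    node: str        # hypothetical node identifier
    energy: float    # requested energy (e.g., Wh) for the next interval
    priority: int    # higher value = more urgent


def allocate(surplus: Dict[str, float], requests: List[Request]) -> Dict[str, float]:
    """Greedy priority allocation of pooled surplus energy (illustrative only)."""
    available = sum(max(0.0, s) for s in surplus.values())
    grants: Dict[str, float] = {}
    # Serve the most urgent requests first; the stable sort keeps arrival order for ties.
    for req in sorted(requests, key=lambda r: r.priority, reverse=True):
        granted = min(req.energy, available)
        grants[req.node] = grants.get(req.node, 0.0) + granted
        available -= granted
    return grants
```

For example, with 500 Wh of pooled surplus, a priority-5 request for 300 Wh is served in full before a priority-1 request for 400 Wh, which receives the remaining 200 Wh.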

Health

MANASIK SWASTHYA YANTRAM : SoC based mental health monitoring and recommender system

AP049

Mental and behavioural problems make up an increasing share of health problems the world over. The burden of illness resulting from psychiatric and behavioural disorders is enormous, although it remains grossly under-represented by conventional public health statistics, which focus on mortality rather than morbidity or dysfunction. Psychiatric disorders account for 5 of the 10 leading causes of disability as measured by years lived with a disability. The overall DALY (disability-adjusted life year) burden of neuropsychiatric disorders increased to 15% in 2020. At the international level, mental health is receiving increasing importance, as reflected by the WHO's choice of mental health as the theme for World Health Day (7 April 2001), the World Health Assembly (15 May 2001), and the World Health Report 2001.

Consider the Covid-19 pandemic: large-scale home confinement has greatly curtailed opportunities to monitor psychosocial needs and deliver support during direct patient encounters in clinical practice. Psychosocial services, which are increasingly delivered in primary care settings, are now being offered through telemedicine. In the context of Covid-19, psychosocial assessment and monitoring should include queries about Covid-19-related stressors (such as exposure to infected sources, infected family members, loss of loved ones, and physical distancing), secondary adversities (such as economic loss), psychosocial effects (such as depression, anxiety, psychosomatic preoccupations, insomnia, increased substance use, and domestic violence), and indicators of vulnerability (such as pre-existing physical or psychological conditions). Some patients will need referral for formal mental health evaluation and care, while others may benefit from supportive interventions designed to promote wellness and enhance coping (such as psychoeducation or cognitive behavioral techniques). Given the widening economic crisis and the many uncertainties surrounding this pandemic, suicidal ideation may emerge and necessitate immediate consultation with a mental health professional or referral for possible emergency psychiatric hospitalization.

We believe that big problems have simple solutions. Continuous monitoring combined with timely recommendations and suggestions can help people in a very positive way, and we approach this problem with a positivity that is important in anyone's life. Our project consists of a wearable device with sensors that monitor different physiological parameters; a gas sensor in the living room that monitors pollution and toxic gases; and a recommender system that applies ML algorithms to captured images and video, using the cloud and a cloud connectivity kit to monitor human behavior. Data from the sensors and the ML algorithms are combined to give people timely recommendations.
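How the sensor data and ML outputs are combined is left open. A minimal rule-based sketch is shown below, assuming hypothetical inputs (heart rate from the wearable, a gas-sensor reading in ppm, and a 0-1 stress score from the image/video model). All thresholds are illustrative placeholders, not clinical guidance.

```python
from typing import List


def recommend(heart_rate_bpm: float, gas_ppm: float, stress_score: float) -> List[str]:
    """Combine sensor readings and an ML stress score into suggestions.

    All thresholds are illustrative placeholders, not clinical values.
    """
    tips: List[str] = []
    if gas_ppm > 1000:  # hypothetical indoor-air limit
        tips.append("Ventilate the room: air quality is poor.")
    if stress_score > 0.7 and heart_rate_bpm > 100:
        # Both the camera model and the wearable point to stress.
        tips.append("Take a short break and try a breathing exercise.")
    elif stress_score > 0.7:
        tips.append("Consider talking to someone you trust today.")
    if not tips:
        tips.append("No action needed right now.")
    return tips
```

A deployed recommender would replace these fixed rules with learned models and route high-risk signals to a professional, as the text above describes.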

Autonomous Vehicles

Drone package delivery safety in turbulent atmospheric conditions in confined areas like cities

AS038

We believe that the wide adoption of drones to replace current CO2-emitting delivery vans will significantly reduce carbon emissions to the atmosphere. However, if drones cannot be adopted safely, this valuable reduction will not be realized. Our project combines an FPGA, an embedded processor, analog sensors, and cloud communications to enable the wide and safe adoption of drones in place of traditional CO2-emitting delivery systems.

We will demonstrate an FPGA + processor + sensor technology that enables a scout drone to detect atmospheric upsets, such as turbulence generated around buildings in windy conditions or city thermals. Atmospheric upsets can cause drone loss of control (LOC), collisions between drones, and collisions with buildings or people. In the demonstration, the scout will send near real-time turbulence location data via the cloud to cargo drones so that packages are delivered safely with reduced hazard to third parties.

The key technology of turbulence and upset detection for preventing LOC has already been developed by Foale Aerospace Inc. as a solar-powered sensor system that can be attached to flying vehicles without aircraft wiring or integration. Expert judges at AirVenture 2021 in Oshkosh, Wisconsin, awarded us 3rd prize in the Experimental Aircraft Association Founders Prize competition for solutions that prevent loss of control.

We propose adding FPGA signal processing to improve performance, reduce detection times, and reduce false positives from our system. We also propose a cloud interface that lets hazardous conditions detected by a light scout drone change the flight path of a cargo drone in near real time (a few seconds of latency), preventing a hazardous or unsafe outcome.
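Foale Aerospace's detection algorithm is not described here. As a generic stand-in only, a common way to flag turbulence in inertial data is a sliding-window RMS of vertical acceleration, declared only after the threshold has been exceeded for several consecutive samples; this trades a little latency for fewer false positives. The window length, threshold, and persistence count are assumptions.

```python
import math
from collections import deque
from typing import Deque, Iterable, List


def detect_turbulence(accel_z: Iterable[float], window: int = 8,
                      threshold: float = 1.5, persistence: int = 12) -> List[int]:
    """Return sample indices at which a turbulence episode is declared.

    accel_z: vertical acceleration in m/s^2 with gravity already removed.
    """
    buf: Deque[float] = deque(maxlen=window)
    run = 0
    alerts: List[int] = []
    for i, a in enumerate(accel_z):
        buf.append(a)
        if len(buf) < window:
            continue
        mean = sum(buf) / window
        rms = math.sqrt(sum((x - mean) ** 2 for x in buf) / window)
        # persistence > window, so a lone spike (which stays in the buffer for
        # only `window` samples) cannot raise an alert by itself.
        run = run + 1 if rms > threshold else 0
        if run == persistence:
            alerts.append(i)
    return alerts
```

On an FPGA the same running sums would typically be kept incrementally in fixed point rather than recomputed per sample.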

We have experience with Verilog, Quartus, and ModelSim targeting a Terasic DE0-Nano with a Raspberry Pi Zero processor interface written in Python, as well as with the Yosys/Arachne-pnr/IceStorm toolchain for the Trenz Icezero iCE40 FPGA. We have 30 years of programming experience in C and C++. We will use this experience to breadboard a flight system on the Terasic-Intel-Analog Devices-Microsoft InnovateFPGA platform. Real flight data recorded during light drone flights in turbulent and calm conditions will be used to demonstrate, with the InnovateFPGA platform treated as if it were mounted on a scout drone, the identification of atmospheric conditions that cause aircraft upsets. The communication of hazard bulletins via the cloud to the cargo drone will be demonstrated over a Wi-Fi link to a Raspberry Pi-based processor mounted on a wheeled rover, showing hazard bulletin reception and the responsive action a cargo drone would take in flight.
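The bulletin format is not specified. As a minimal sketch, assume the scout publishes a JSON hazard bulletin with a centre position and radius (the field names `lat`, `lon`, and `radius_m` are hypothetical), and the receiving rover or cargo drone checks which waypoints of its planned route fall inside the hazard circle using the haversine great-circle distance.

```python
import json
import math
from typing import List, Tuple

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two lat/lon points (spherical Earth)."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def route_conflicts(bulletin_json: str, route: List[Tuple[float, float]]) -> List[int]:
    """Return indices of route waypoints inside the bulletin's hazard circle."""
    b = json.loads(bulletin_json)  # hypothetical fields: lat, lon, radius_m
    return [i for i, (lat, lon) in enumerate(route)
            if haversine_m(lat, lon, b["lat"], b["lon"]) <= b["radius_m"]]
```

A conflicting waypoint would prompt the cargo drone to re-plan or hold; the choice of response policy is left open here.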

Health

CO2 gas sensor for air quality monitoring

EM034

CO2 gas sensors are rapidly gaining interest as a low-cost tool, not only for monitoring air quality inside buildings but also to assess the risk of infection by airborne diseases such as COVID-19.

We will develop a prototype system on the DE10-Nano development kit, built around a custom RISC-V microcontroller that processes the signals from the CO2 sensor. The system will measure CO2 concentrations in the range 400-5000 ppm.
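The signal chain from the sensor to a ppm value is not described. Assuming the sensor already reports a raw ppm value (an assumption), a minimal post-processing step the microcontroller firmware might perform is a moving-average filter with clamping to the stated 400-5000 ppm range, plus a flag for readings outside it. It is shown in Python for readability; firmware would more likely be written in C.

```python
from collections import deque
from typing import Deque, Tuple


class Co2Filter:
    """Moving-average filter for CO2 readings with range checking (sketch)."""
    MIN_PPM, MAX_PPM = 400.0, 5000.0  # measurement range stated in the proposal

    def __init__(self, window: int = 4) -> None:
        self.samples: Deque[float] = deque(maxlen=window)

    def update(self, raw_ppm: float) -> Tuple[float, bool]:
        """Add a raw reading; return (filtered ppm, in_range flag)."""
        in_range = self.MIN_PPM <= raw_ppm <= self.MAX_PPM
        # Clamp before averaging so one out-of-range value cannot dominate.
        clamped = min(max(raw_ppm, self.MIN_PPM), self.MAX_PPM)
        self.samples.append(clamped)
        return sum(self.samples) / len(self.samples), in_range
```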

Food Related

ManGO!

AS019

IoT system for mango preservation using Microsoft Azure and FPGAs.

Food Related

Smart farm system

EM012

In this project, we will create an autonomous smart farm system that measures climatic conditions such as humidity, temperature, and CO2 emissions, and then controls farm equipment such as the water tank, the temperature inside the greenhouse, and the fire suppression system.
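A common way to drive greenhouse actuators from such measurements, shown here as an assumption rather than the team's design, is on/off control with hysteresis, which keeps a fan or heater from toggling rapidly around a single setpoint. The setpoint and band values are hypothetical.

```python
class HysteresisController:
    """On/off controller with a dead band (illustrative sketch).

    Switches the actuator on above setpoint + band and off below
    setpoint - band, e.g. a ventilation fan for greenhouse cooling.
    """

    def __init__(self, setpoint: float, band: float) -> None:
        self.setpoint = setpoint
        self.band = band
        self.on = False

    def update(self, measurement: float) -> bool:
        if measurement > self.setpoint + self.band:
            self.on = True
        elif measurement < self.setpoint - self.band:
            self.on = False
        # Inside the band the previous state is kept, preventing chattering.
        return self.on
```

With this structure, a farmer changing the temperature from the website or app would simply update `setpoint`.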

Farmers will also be able to control the system through a website and a mobile app, for example to change the temperature or to switch the drip irrigation system on and off. The website and the mobile app will provide a real-time overview of the system, so the temperature, soil humidity, and other readings can be checked at any moment. If any part of the system fails, the farmer will be notified through the website and the mobile app, and an alarm will be triggered.

The farm is also equipped with a security system: when intruders try to enter the farm, an alarm is triggered and an SOS message is sent to the farmer and the police.

The energy for the system will be provided by a solar tracker panel.
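The tracker design is not described. A widely used low-cost approach, sketched here as an assumption, compares two light sensors mounted on either side of a divider and steps the panel toward the brighter side, with a dead band so the motor does not hunt around the balance point. The sensor inputs, step size, and angle limits are hypothetical.

```python
from typing import Tuple


def tracker_step(east_lux: float, west_lux: float, angle_deg: float,
                 step_deg: float = 1.0, dead_band: float = 0.05,
                 limits: Tuple[float, float] = (-60.0, 60.0)) -> float:
    """Return the new panel angle for a single-axis two-sensor tracker.

    Positive angles tilt the panel toward the west (assumed convention).
    """
    total = east_lux + west_lux
    if total <= 0:
        return angle_deg  # night or sensor fault: hold position
    # Normalised imbalance in [-1, 1]; positive means the west side is brighter.
    error = (west_lux - east_lux) / total
    if abs(error) > dead_band:
        angle_deg += step_deg if error > 0 else -step_deg
    return min(max(angle_deg, limits[0]), limits[1])
```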

Smart City

Automatic garbage classification management system

PR025

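This project uses image recognition to detect the category of medical waste and sort it into the appropriate bins. By classifying medical waste, it aims to reuse medical waste as far as possible, saving resources while reducing harm to the environment.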
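How recognition results map to bins is not specified. A minimal sketch, assuming an image classifier that returns a probability per waste category (the category names and bin numbers are hypothetical), routes each item to the bin of its most likely category and falls back to manual review when the classifier is not confident, which matters for medical waste where a wrong bin can be hazardous.

```python
from typing import Dict, Optional

# Hypothetical category-to-bin mapping; a real system would follow local regulations.
BINS: Dict[str, int] = {"infectious": 1, "sharps": 2, "pharmaceutical": 3,
                        "chemical": 4, "recyclable": 5}


def route_item(probs: Dict[str, float], min_conf: float = 0.8) -> Optional[int]:
    """Return the bin for the most likely category, or None for manual review."""
    label, conf = max(probs.items(), key=lambda kv: kv[1])
    if conf < min_conf or label not in BINS:
        return None  # low confidence or unknown label: send to a human operator
    return BINS[label]
```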

Smart City

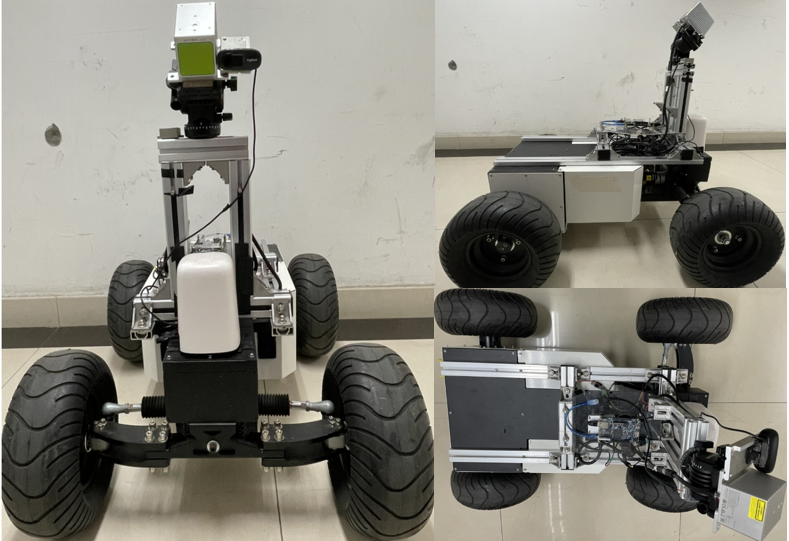

Intelligent Pavement Damage Detection System for Urban Roads

PR033

Pavement damage on urban roads not only spoils the appearance of the road surface and driving comfort, but can also easily spread into structural damage that shortens the service life of the pavement. If it is not repaired in time, it accelerates local deterioration of the road surface and can lead to serious traffic accidents, causing many personal injuries and large economic losses. To reduce such losses and use urban roads sustainably, reasonable and timely road maintenance and management are particularly important, and damage detection is the primary task of road maintenance and management. This project therefore designs an intelligent road damage detection system for urban roads based on 3D lidar and cameras, which collects and analyzes road data in real time and reports damage objectively and accurately.

Instead of relying on manual inspection, our solution uses multi-sensor fusion, accelerates processing on FPGA edge hardware, and uploads the results to a cloud server. It displays the GPS location of each road defect in real time, together with accurate detection results. In addition, the road-surface scans can be stitched into a vectorized map, the Pavement Condition Index (PCI) can be calculated from the size and shape of the defects, and maintenance personnel can be notified to make timely repairs.
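The proposal does not state which PCI formula is used. As one possibility, Chinese highway assessment standards compute a damage ratio DR (weighted damaged area as a percentage of the surveyed area) and then PCI = 100 - a0·DR^a1. The sketch below implements that form with the asphalt-pavement coefficients commonly cited for it (a0 = 15.00, a1 = 0.412); treat the coefficients and the per-defect weights as assumptions to verify against the applicable standard. ASTM D6433, for example, uses a different deduct-value method.

```python
from typing import List, Tuple


def pci(defects: List[Tuple[float, float]], surveyed_area_m2: float,
        a0: float = 15.00, a1: float = 0.412) -> float:
    """Pavement Condition Index from detected defects (sketch).

    defects: (damaged_area_m2, weight) pairs, where the weight reflects the
    defect type and severity. The default coefficients are values commonly
    cited for asphalt pavement and should be checked against the standard in use.
    """
    if surveyed_area_m2 <= 0:
        raise ValueError("surveyed area must be positive")
    # Damage ratio DR: weighted damaged area as a percentage of surveyed area.
    dr = 100.0 * sum(area * w for area, w in defects) / surveyed_area_m2
    return max(0.0, 100.0 - a0 * dr ** a1)
```

In the proposed pipeline, the defect areas would come from the lidar/camera detections on the stitched map, and the weights from the detected defect type.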

Other: SGP-Sustainable Agriculture

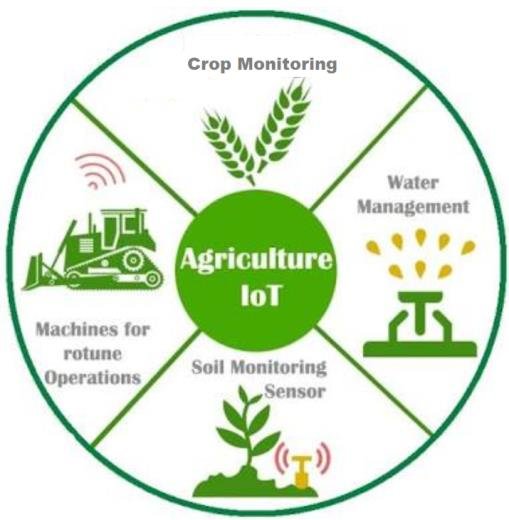

Smart and sustainable agriculture using FPGA

AP050

“Farm at ease” (technology for farming):

To meet the needs of the world's growing population, serious reforms are required in the agricultural sector so that our food system is ready for the challenges ahead. This pushes us to shift from traditional/conservative agricultural practices to sustainable ones. If these sustainable practices are further integrated with technological advances, the major challenges of agriculture and farming across the world can be resolved efficiently and effectively.

The following are some of the key challenges posed by the agricultural sector:

1. There is a considerable gap between technology adoption in the agricultural sector and in non-agricultural sectors, especially in developing countries.

2. Mismanaged crop planning without proper soil analysis.

3. Overutilization/underutilization of fertilizers.

4. Mismanagement of irrigation, which can lead to water scarcity or wastage.

5. Delay in identification of weeds/pests.

6. Inability to adopt the best agricultural practices from other regions.

Proposed solution:

To address the above challenges, we propose an IoT-based system (with mobile/web application development and a message alert system) that will aid the farmer in the following ways:

1. Crop recommendation based on the soil condition, climate and water availability of that region.

2. Automatic irrigation system depending upon the crop requirement.

3. Recommendation of organic farming practices in place of fertilizers wherever possible.

4. Disease detection in crops using image processing.

5. Recommendations and alerts will be presented simply and, where possible, as voice instructions in regional languages.
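As a minimal sketch of item 2, assume a soil-moisture sensor reporting volumetric water content and a per-crop table of moisture limits (the crop names and values below are placeholders, not agronomic recommendations). Irrigation starts when moisture falls below the crop's lower limit and stops once it reaches the upper limit; a forecast of rain, another assumed input, prevents a new irrigation cycle from starting.

```python
from typing import Dict, Tuple

# Placeholder (lower, upper) volumetric soil-moisture limits in percent.
CROP_MOISTURE: Dict[str, Tuple[float, float]] = {
    "tomato": (25.0, 35.0),
    "maize": (20.0, 30.0),
}


def irrigation_valve(crop: str, moisture_pct: float, valve_open: bool,
                     rain_expected: bool = False) -> bool:
    """Return the new valve state for one control cycle."""
    low, high = CROP_MOISTURE[crop]
    if moisture_pct >= high:
        return False                  # target reached: stop watering
    if moisture_pct < low and not rain_expected:
        return True                   # too dry and no rain forecast: start watering
    return valve_open                 # otherwise keep the current state
```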

Design idea:

The proposed idea will first be built as a real-time prototype device in which an FPGA board is integrated with sensors such as an NPK sensor, a humidity sensor, a temperature sensor, and a water-level sensor. Communication will then be established between the FPGA board and a mobile phone using the FPGA's capabilities, the Azure cloud, and similar services. This prototype will be tested in a real agricultural field, and based on feedback and recommendations from its users (farmers), a robust system may be developed in the future.

Water Related

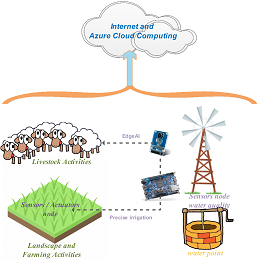

Smart water monitoring, protection of pastoral and agricultural areas on dry-lands

EM005

With climate change and water scarcity, policies in arid countries aim to reserve dam water for domestic and industrial use only. Lacking both budget and alternatives, small farms turn to innovative low-cost solutions for irrigation and livestock. The proposed project outlines a new approach that supports agricultural agencies and policies at all levels, livestock professionals, smallholder farmers, and local populations in curbing the ecologically unsustainable exploitation of water on drylands. It aims to implement edge artificial intelligence on Intel FPGAs together with Microsoft Azure cloud computing to predict water quality and its evolution, and to manage an innovative irrigation process, livestock watering points, and artisan activities (such as pottery).
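The proposal does not name its prediction model. As a simple, swapped-in baseline for forecasting how a water-quality parameter evolves (for example electrical conductivity as a salinity proxy; the parameter is an assumption), Holt's linear exponential smoothing tracks a level and a trend and extrapolates them. An edge AI model on the FPGA would replace this with a learned predictor; the smoothing factors below are illustrative.

```python
from typing import List, Sequence


def holt_forecast(series: Sequence[float], horizon: int,
                  alpha: float = 0.5, beta: float = 0.3) -> List[float]:
    """Holt's linear exponential smoothing forecast (baseline sketch)."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = series[0], series[1] - series[0]
    for y in series[1:]:
        prev_level = level
        # Update the level toward the new observation, then the trend toward
        # the latest change in level.
        level = alpha * y + (1 - alpha) * (level + trend)
        trend = beta * (level - prev_level) + (1 - beta) * trend
    # Extrapolate the final level along the final trend.
    return [level + h * trend for h in range(1, horizon + 1)]
```

For a steadily rising series such as 100, 110, 120, 130, 140, the forecast continues the trend at 150, 160, 170.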