Smart City

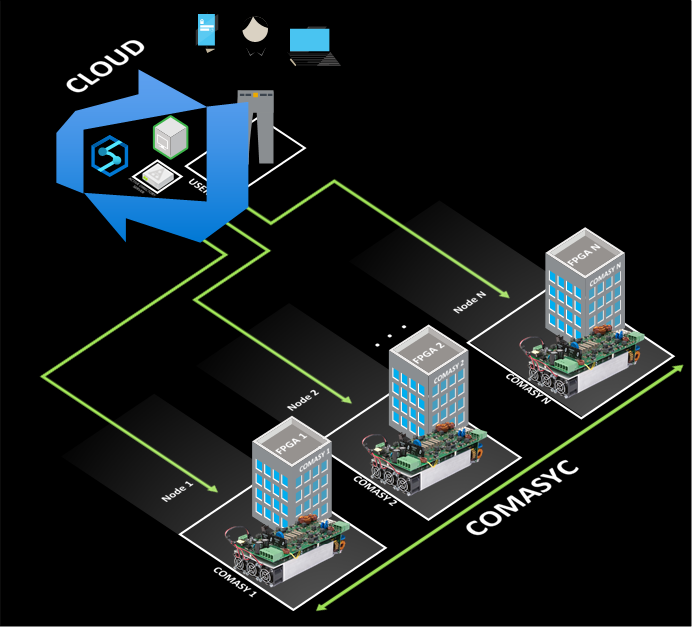

Converter management system connected to the cloud (COMASYC)

AS009 »

Energy is a priority for humanity. Green energy sources are therefore necessary both to address energy shortages and to preserve the environment, and electronic converters play a key role in using them.

A cloud-connected converter management system controlled by an FPGA is proposed for Industry 4.0 applications. It can integrate photovoltaic (PV) solar arrays, batteries, and loads at the same time, using different converters according to user requirements. The proposed system therefore manages modulation and control algorithms, collects information, and distributes energy.

For example, if the user requires a PV array, the perturb-and-observe (P&O) maximum power point tracking (MPPT) algorithm is implemented to extract the maximum energy from the PVs.
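As an illustration of the P&O idea, the following is a minimal sketch, not the project's implementation. It assumes a hypothetical toy power curve (`pv_power`, with a single maximum at 30 V) and assumes the converter tracks the commanded voltage exactly; the step size and starting point are also illustrative.

```python
def pv_power(voltage):
    """Hypothetical toy PV curve: one maximum of 900 W at 30 V."""
    return 900.0 - (voltage - 30.0) ** 2


def perturb_and_observe(v, p, prev_v, prev_p, step=0.5):
    """Return the next operating voltage for one P&O iteration."""
    dp = p - prev_p
    dv = v - prev_v
    if dp == 0:
        return v  # no change in power: hold the operating point
    # If power and voltage moved in the same direction, the maximum lies
    # at a higher voltage; otherwise it lies at a lower voltage.
    if (dp > 0) == (dv > 0):
        return v + step
    return v - step


def track_mpp(v_start=20.0, iterations=50, step=0.5):
    """Run P&O on the toy curve and return the final operating voltage."""
    prev_v = v_start - step
    prev_p = pv_power(prev_v)
    v = v_start
    for _ in range(iterations):
        p = pv_power(v)
        next_v = perturb_and_observe(v, p, prev_v, prev_p, step)
        prev_v, prev_p = v, p
        v = next_v
    return v


if __name__ == "__main__":
    print(track_mpp())
```

On this toy curve the operating point climbs toward 30 V and then oscillates within one step of it, which is the characteristic steady-state behavior of P&O.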

Parameters such as the energy consumption and production of each node are also important for redistributing energy. For this reason, the model has a single brain connected to every node: a novel control scheme is proposed, based on load balancing managed by the cloud for high-priority requests. The cloud is the single brain that controls the system and organizes the power flow among nodes. The project focuses on the Peruvian context, for places where energy has to be a priority.
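The cloud-side balancing can be pictured with a small sketch. This is only an assumption about how such a scheme might look, not the proposed control: the node fields (`production`, `consumption`, `priority`) and the greedy rule (serve the most urgent deficit first from any available surplus) are hypothetical.

```python
def redistribute(nodes):
    """Plan energy transfers from surplus nodes to deficit nodes.

    nodes: list of dicts with keys name, production, consumption, priority
           (a lower priority number means a more urgent request).
    Returns a list of (donor, receiver, amount) tuples.
    """
    # Surplus available at each node that produces more than it consumes.
    surplus = {
        n["name"]: n["production"] - n["consumption"]
        for n in nodes
        if n["production"] > n["consumption"]
    }
    # Deficit nodes, most urgent first.
    requests = sorted(
        (n for n in nodes if n["consumption"] > n["production"]),
        key=lambda n: n["priority"],
    )
    transfers = []
    for req in requests:
        need = req["consumption"] - req["production"]
        for donor in surplus:
            if need <= 0:
                break
            give = min(need, surplus[donor])
            if give > 0:
                transfers.append((donor, req["name"], give))
                surplus[donor] -= give
                need -= give
    return transfers


if __name__ == "__main__":
    demo = [
        {"name": "A", "production": 10, "consumption": 4, "priority": 3},
        {"name": "B", "production": 2, "consumption": 5, "priority": 2},
        {"name": "C", "production": 0, "consumption": 5, "priority": 1},
    ]
    print(redistribute(demo))
```

In the demo, node C has the most urgent request and is served in full before node B receives whatever surplus remains.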

Autonomous Vehicles

Drone package delivery safety in turbulent atmospheric conditions in confined areas like cities

AS038 »

We believe the wide adoption of drones to replace current CO2-emitting delivery vans will contribute to a significant reduction of carbon additions to the atmosphere. However, if drones cannot be adopted safely, this valuable reduction of carbon emissions will not be realized. Our project combines the technologies of an FPGA, an embedded processor, analog sensors, and cloud communications to enable the wide and safe adoption of drones to replace traditional CO2-emitting delivery systems.

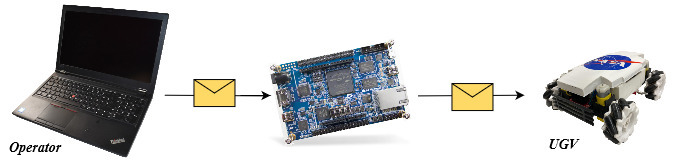

We will demonstrate an FPGA + Processor + Sensor technology that enables a scout drone to detect atmospheric upsets such as turbulence generated around buildings in windy conditions or city thermals. Atmospheric upsets of drones can cause drone loss of control (LOC), collisions between drones, and collisions with buildings or people. The scout demonstration will send near real-time turbulence location data via the cloud to cargo drones to ensure safe delivery of packages with a reduced hazard to third parties.

The key technology of turbulence and upset detection for prevention of LOC has already been developed by Foale Aerospace Inc and implemented as a solar-powered sensor system that can be attached to flying vehicles without aircraft wiring or integration. Expert judges at Air Venture 2021 in Oshkosh, Wisconsin, awarded us 3rd prize in the Experimental Aircraft Association Founders Prize competition to produce solutions that prevent Loss of Control.

We propose to add FPGA signal processing to improve performance, reduce detection times, and reduce false positive signals from our system. We also propose a cloud interface that will allow hazardous conditions detected by a light scout drone to change the flight path of a cargo drone in near real time (a few seconds of latency) and so prevent a hazardous or unsafe outcome.

We have experience in Verilog, Quartus, and ModelSim targeting a Terasic DE0-Nano with a Raspberry Pi Zero processor interface written in Python, as well as the Yosys/Arachne-pnr/IceStorm toolchain for the Trenz Icezero iCE40 FPGA. We have 30 years of programming experience with C and C++. We will use this experience to breadboard a flight system onto the Terasic-Intel-Analog Devices-Microsoft InnovateFPGA platform. Real flight data recorded during light drone aircraft flights in turbulent and calm conditions will be used to demonstrate the identification of atmospheric conditions that cause aircraft upsets, using the InnovateFPGA platform as if it were mounted on a scout drone. Communication of hazard bulletins via the cloud to the cargo drone will be demonstrated over a Wi-Fi link to a Raspberry Pi based processor mounted on a wheeled rover, which will stand in for a cargo drone in flight receiving a hazard bulletin and taking responsive action.

Food Related

ManGO!

AS019 »

IoT system for mango preservation using Microsoft Azure and FPGAs.

Data Management

Hydroponic Harvest Information Infrastructure

AS020 »

A controlled environment minimizes a crop's dependency on the weather; consequently, hydroponic greenhouses improve harvest quality and water management. Despite these benefits, this kind of crop requires better knowledge of plant physiology and nutrition in order to understand the nutritional balance and apply chemical corrections within short periods. In addition, similar crops can be compared in a distributed way, but this alone does not allow the field engineer to work effectively. If the field engineer has access to information about the crop and its environment, he or she can apply preventive and corrective protocols to reduce toxicity damage from an element or to improve plant characteristics for a better product. Researchers could compare their results with farmers' crops to develop action protocols and improve plant genetics. Data handling is the main task in this proposal. To improve the workflow, an infrastructure will be implemented that allows crop information to be shared for analysis while the engineer arrives and acts. On the one hand, the main station, composed of a DE10-Nano and signal conditioners, provides an interface between the user and the cloud services for uploading crop data. On the other hand, the cloud services allow remote interaction between the engineer and the crop without the engineer being physically present.

The station would use the FPGA to control data acquisition and flow, while the HPS monitors the data and interfaces it with the cloud services. The signal conditioners ease the acquisition of pH, conductivity, relative humidity, light intensity, and other measurements. The cloud services store and interpret the sensor data and provide machine learning tools to extract more meaning from it.

Other: Disaster Prevention

Early Warning Alert System for Forest Fires

AS030 »

We are proposing a low-cost, low-power, wide-area sensor network that will quickly alert the authorities in case of a forest fire. The project will use a mesh network of low-power LoRa transmitter nodes connected to temperature sensors. The network will be inactive until the temperature at any one node rises above a programmable limit, in which case that node will send an alert to the FPGA through the network. The FPGA will decide whether the alert is real based on fire data modeling of the sensors and will alert the authorities with the exact location of the fire.
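The two-stage flow described above (a node-side threshold followed by a central plausibility check) can be sketched as follows. This is a minimal illustration under stated assumptions: the `SensorNode` fields are hypothetical, and `confirm_fire` uses a simple sustained-rise rule as a stand-in for the fire data modeling that the proposal leaves unspecified.

```python
from dataclasses import dataclass


@dataclass
class SensorNode:
    node_id: str
    latitude: float
    longitude: float
    limit_c: float  # programmable alert threshold, degrees Celsius

    def should_alert(self, temperature_c):
        """Node-side rule: stay silent until the limit is exceeded."""
        return temperature_c > self.limit_c


def confirm_fire(readings_c, min_rise_c=5.0):
    """Stand-in plausibility check (an assumption, not the project's model).

    Treat the alert as real only if the recent readings rise steadily and
    the total rise is at least min_rise_c.
    """
    if len(readings_c) < 3:
        return False
    rising = all(b > a for a, b in zip(readings_c, readings_c[1:]))
    return rising and (readings_c[-1] - readings_c[0]) >= min_rise_c


def handle_alert(node, readings_c):
    """Return a report with the node location, or None if not confirmed."""
    if node.should_alert(readings_c[-1]) and confirm_fire(readings_c):
        return {"node": node.node_id,
                "location": (node.latitude, node.longitude)}
    return None


if __name__ == "__main__":
    node = SensorNode("n7", -12.0, -77.0, limit_c=60.0)
    print(handle_alert(node, [55.0, 62.0, 71.0]))
```

Keeping the node-side rule to a single comparison fits the low-power goal, since the node only needs to transmit when the limit is exceeded.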

Water Related

River Guardian

AS039 »

The high level of river pollution in industrial and metropolitan environments negatively impacts the ecosystem and raises the government's cost of maintaining and cleaning those areas. A significant number of rivers, though, are never or rarely cleaned, since there is not enough data about their pollution level or about where the garbage accumulates. Hence, a significant portion of all river waste worldwide is never discovered. It remains unattended, increasing environmental degradation and furthering the impunity of bad actors who pollute rivers without being held accountable.

We propose a river waste monitoring system composed of a UAV equipped with an image capturing and processing device based on an FPGA. The proposed system also includes support stations with solar panels that will send the collected data to a cloud application while also sending data back to the UAV about its energy status.

The UAV will be capable of flying over the river waters and the riverbank in search of waste. It will also have a small onboard compartment that will be used to collect water samples, which will then be sent back to a support station for testing.

The support stations will aid the UAVs by collecting solar energy to charge them and analyzing the water collected by the UAVs.

The cloud application will have a dashboard that will display the garbage accumulation spots on a map alongside pictures of the trash and historical data.

The FPGA is an essential part of the system because it will provide the computing power and precision needed for the obstacle and waste recognition algorithm to work in real-time while also being energy-efficient.

The project's expected outcome is to have a relatively low-cost, self-sustainable autonomous system that can be easily deployed on various rivers and efficiently map the river and riverbank area for garbage accumulation spots while also assessing the water quality. This system will provide the local government and agencies with real-time and detailed data about the river's health and waste accumulation spots. Furthermore, the data gathered will be valuable in policies and efforts to restore the river's condition and educate the local community about correct waste disposal practices and other ways of ensuring the nearby river's well-being.

Other

Reco-LWC: Reconfigurable Lightweight Crypto for IoT applications

AS005 »

NIST has announced the finalists of its Lightweight Cryptography competition for securing small devices, which targets Internet of Things applications. We plan to build a reconfigurable processor that runs all 10 candidates on an FPGA using dynamic reconfiguration. The proposed processor will be evaluated on the DE10-Nano Cyclone V SoC FPGA board and on Microsoft Azure IoT, from both software and hardware perspectives.

Smart City

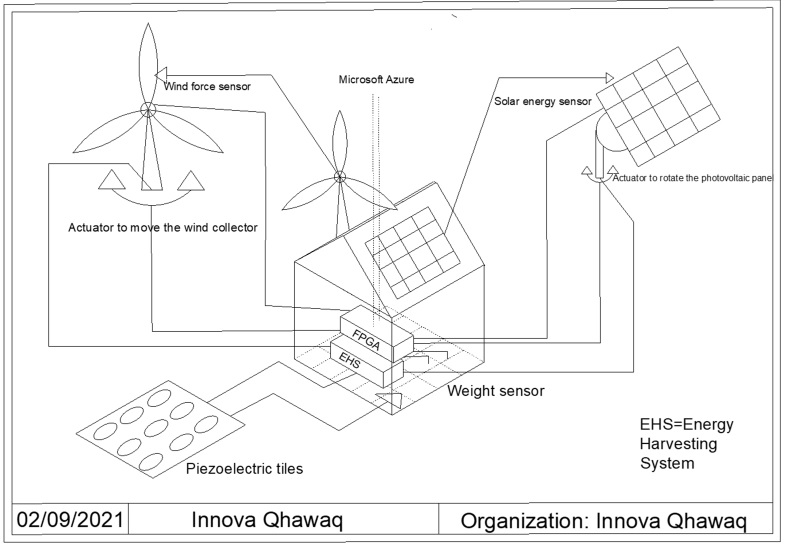

Innova Qhawaq

AS012 »

The main concept of our project is to maximize energy use by efficiently harvesting renewable sources within the home: photovoltaic energy from solar panels that are moved intelligently by neural networks seeking maximum exposure to sunlight; energy harvested from strategic places such as doors, windows, and the floor; and wind energy obtained efficiently through artificial intelligence adapted to the environment. The DE10-Nano FPGA serves as the data processing center: information from the sensors of these energy sources is used to send signals to actuators, which set the exact orientation at which as much energy as possible is collected. The FPGA thus works as a decision-making center that controls these actuators. In addition, with data stored intelligently in the cloud, neural networks will be used to optimize both energy collection from the sources and the use of resources.

Other: AI

Jaguar

AS023 »

"Softbank expects ARM to deliver 1 trillion IoT chips in the next 20 years." (Reuters, 2017)

Because many companies and research institutes around the world regard AI as an emerging technology with strong performance and accuracy, the number of AI chips and related systems is increasing exponentially, as SoftBank anticipated in 2017. Given this technological evolution, AI systems are expected to make people's lives richer than in the past, thanks to their versatile functions and performance that can exceed that of humans.

However, people also face side effects of this exponential growth in AI systems, because researchers have recently found that AI training and inference can generate large amounts of CO2. For example, training a single AI model can generate 626,000 pounds of carbon dioxide from the related operations, roughly five times the lifetime emissions of an average car. (MIT Technology Review, 2019)

For that reason, TinyML, with its low-power and high-performance features, is emerging as an alternative to ultra-scale AI systems.

To contribute to sustainability for the next generation, we will focus on designing a low-power, high-performance TinyML accelerator with minimal hardware resources and energy consumption. To realize this goal, we will use Intel FPGA-based IoT device kits, a self-developed hardware architecture, and optimized software stacks to cover the full AI stack and contribute to CO2 reduction through low-power features for global sustainability.

Smart City

Coprocessor-based Antivirus in Order to Detect Malware Preventively

AS025 »

Distinct metamodels of Smart Cities have been developed in order to solve the problems of cities in relation to different socioeconomic indicators. In view of the foregoing, information security plays an important role in guaranteeing human rights in contemporary society. From an infection by malware (malicious + software), a person or institution can suffer irrecoverable losses. For an individual, bank passwords, social network accounts, and intimate photos or videos can be spread across the World Wide Web, affecting finances, dignity, and mental health. An institution, in turn, may have its vital data made inaccessible and/or information about its customers and employees stolen. In short, the theft of intimate information can lead to cases of depression, suicide, and other mental disorders.

The proposed work investigates 86 commercial antiviruses. About 17% of the antiviruses did not recognize the existence of the malicious samples analyzed. Commercial antiviruses, even with revenues in the billions, have low effectiveness and have been criticized by incident researchers for more than a decade. Their operation is based on signatures: the suspect executable is compared to a blacklist built from previous reports (which requires that there have already been victims). Blacklists are rendered effectively useless by the current worldwide rate of creation of virtual pests, which is 8 (eight) new malware samples per second. We concluded that malware is able to deceive antiviruses and other cyber-surveillance mechanisms.

In order to overcome the limitations of commercial antiviruses, this project creates a coprocessor-based antivirus able to identify the modus operandi of a malware application before it is even executed by the user. Our goal is to propose an antivirus, endowed with artificial intelligence, that identifies malware through models based on fast-training, high-performance neural networks. Our coprocessor-based antivirus is equipped with an Extreme Learning Machine of our own design.

Our processor achieves an average accuracy of 99.80% in discriminating between benign and malware executables. Preliminary results indicate that our coprocessor, built on an FPGA, can speed up the response time of the proposed antivirus by about 4765 times compared to the CPU implementation employing the same FPGA. Thus, the malicious intent of the malware is detected preemptively even when it is executed on a slow (low processing power) device. Our antivirus offers high performance, a large capacity for parallelism, and a simple architecture with low power consumption. We concluded that our solution meets the main requirements for the proper operation and construction of an antivirus in hardware.
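For readers unfamiliar with Extreme Learning Machines, the sketch below shows the generic technique in plain Python. It is not the authors' design: the class name, the tanh activation, the ridge-regularized solve, and the four toy samples with labels (+1 for malware, -1 for benign) are all assumptions made for illustration. The defining ELM property is that the hidden layer is random and fixed, so training reduces to one linear least-squares solve for the output weights.

```python
import math
import random


def solve_linear(a, b):
    """Solve a x = b (a is n x n, b has length n) by Gauss-Jordan elimination."""
    n = len(a)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        pv = m[col][col]
        m[col] = [x / pv for x in m[col]]
        for r in range(n):
            if r != col:
                f = m[r][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [row[n] for row in m]


class ELM:
    """Single-hidden-layer network with random, untrained hidden weights."""

    def __init__(self, n_inputs, n_hidden, seed=0):
        rng = random.Random(seed)
        self.w = [[rng.uniform(-2, 2) for _ in range(n_inputs)]
                  for _ in range(n_hidden)]
        self.b = [rng.uniform(-2, 2) for _ in range(n_hidden)]
        self.beta = [0.0] * n_hidden

    def _hidden(self, x):
        return [math.tanh(sum(wi * xi for wi, xi in zip(w, x)) + b)
                for w, b in zip(self.w, self.b)]

    def fit(self, xs, ts, ridge=1e-6):
        """Output weights: beta = H^T (H H^T + ridge * I)^-1 t."""
        h = [self._hidden(x) for x in xs]
        n = len(xs)
        gram = [[sum(p * q for p, q in zip(h[i], h[j]))
                 + (ridge if i == j else 0.0)
                 for j in range(n)] for i in range(n)]
        alpha = solve_linear(gram, list(ts))
        self.beta = [sum(alpha[i] * h[i][k] for i in range(n))
                     for k in range(len(self.b))]

    def predict(self, x):
        score = sum(bk * hk for bk, hk in zip(self.beta, self._hidden(x)))
        return 1 if score >= 0 else -1


# Toy, hypothetical feature vectors; the classes are not linearly separable.
SAMPLES = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
LABELS = [-1, 1, 1, -1]

if __name__ == "__main__":
    model = ELM(n_inputs=2, n_hidden=20, seed=0)
    model.fit(SAMPLES, LABELS)
    print([model.predict(x) for x in SAMPLES])
```

Because no iterative weight updates are needed, training is fast, and the hidden-layer evaluation is a set of independent multiply-accumulate operations, which is the kind of workload that parallelizes naturally in hardware.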

Health

Field Programmable Health

AS026 »

FPGAs can enhance electromedical applications, including patient monitoring, ventilation, and other life-sustaining applications. By using FPGAs and associated analog connectivity devices, the provision of such electromedical care can be distributed, reducing the need for concentrated services and their associated transportation and other costs. This project seeks to apply FPGAs to these applications by exploiting integrated sensors and compute capability.

Smart City

Smart Trashcan

AS034 »

The practice of recycling helps reduce pollution, greenhouse gas emissions, and the amount of waste that is disposed of in landfills. Unfortunately, the majority of Americans do not embrace the three Rs (reduce, reuse, recycle). The lack of adequate knowledge for sorting and recycling materials is one of the biggest barriers to being green. Recycling is a behavior that can be improved through technology. This proposed project is centered around the creation of a smart trash can prototype designed to create awareness among students at Queens University and in its neighboring community of the importance of correctly sorting waste items. The smart trash can has both a hardware and a software component. The project will specifically focus on developing a working prototype and a deep learning (DL) model using the Intel FPGA Cloud Connectivity kit in combination with Microsoft Azure IoT. The model is able to correctly classify different types of disposable and recyclable food service items (paper cups, paper boxes, paper trays, food containers, etc.) commonly found in the Queens University’s cafeteria and around campus. The classification is used by the hardware to provide a visual prompt that indicates the bin for a particular waste item. This can improve the pre-sorting of recyclable materials once the smart trash can is fully deployed on campus.